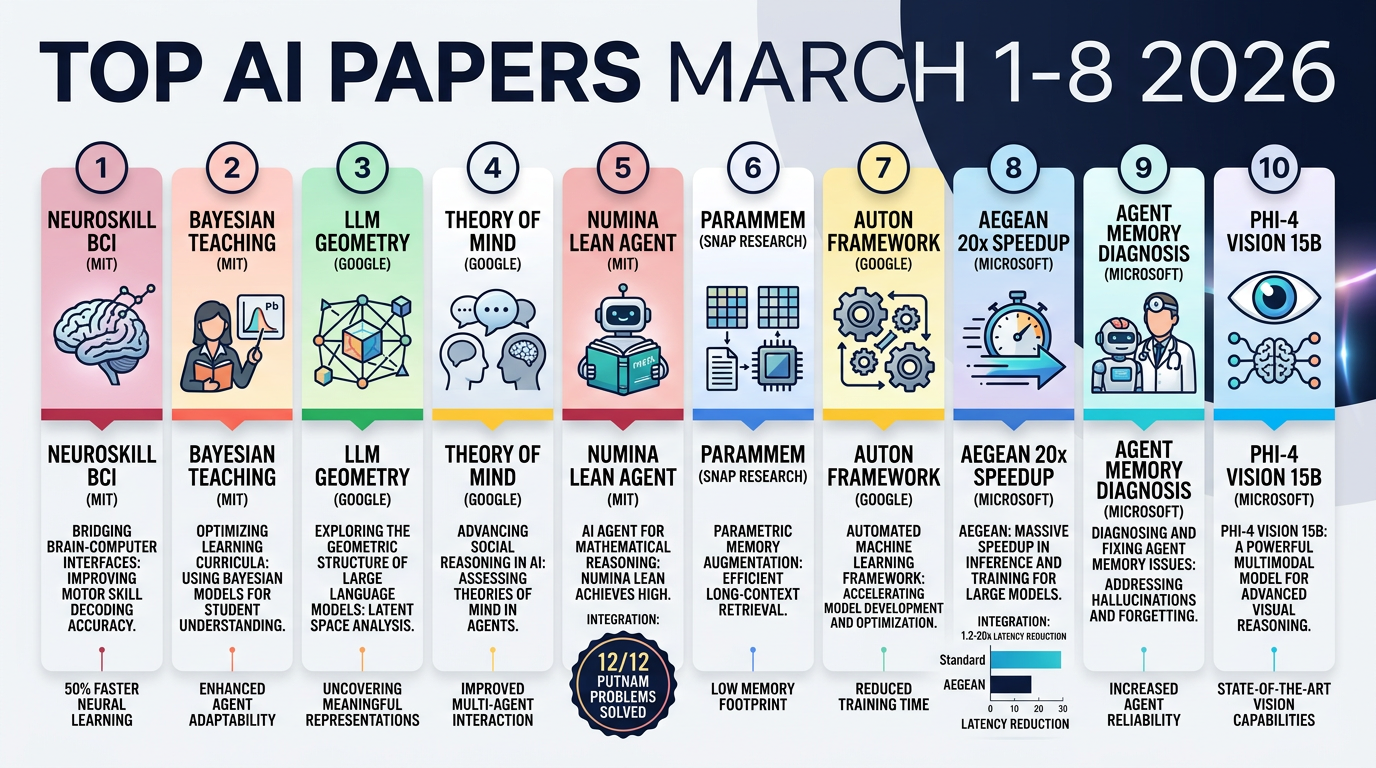

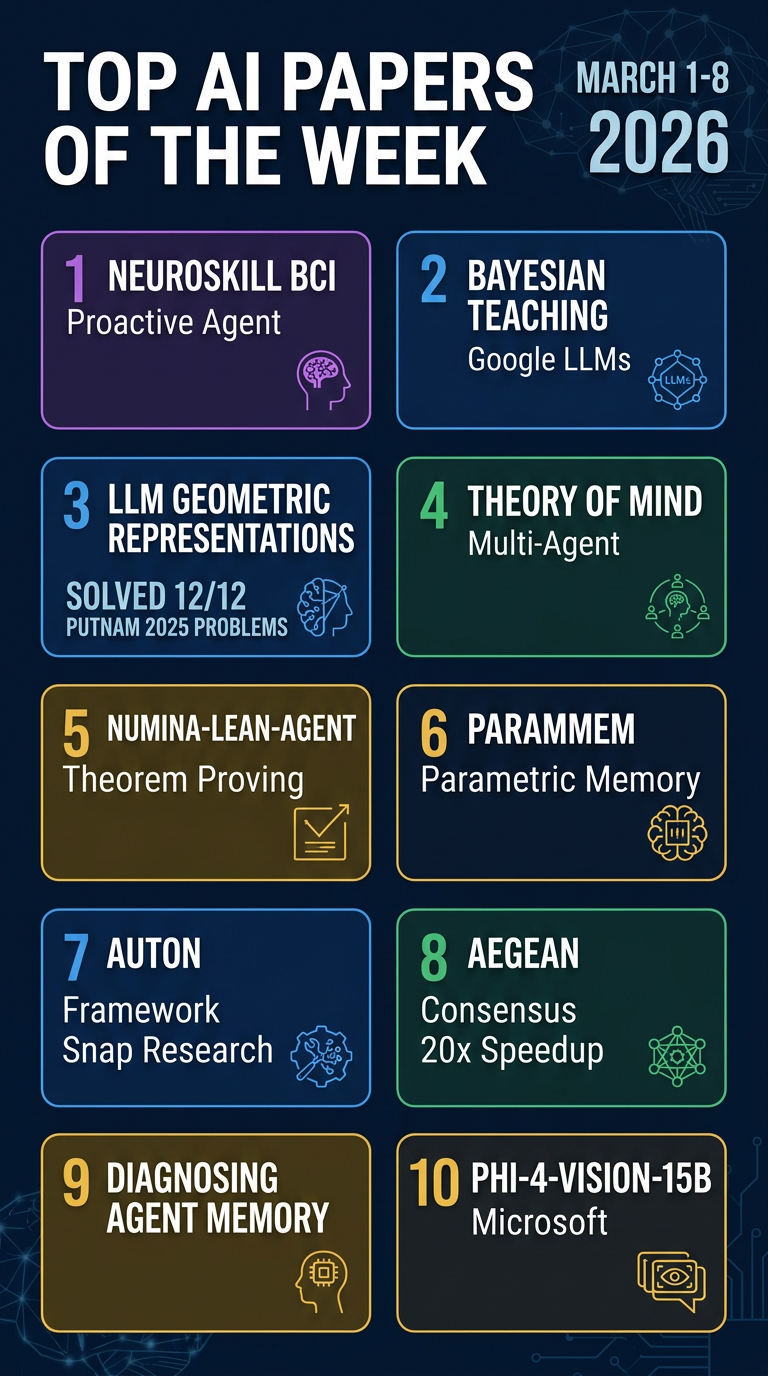

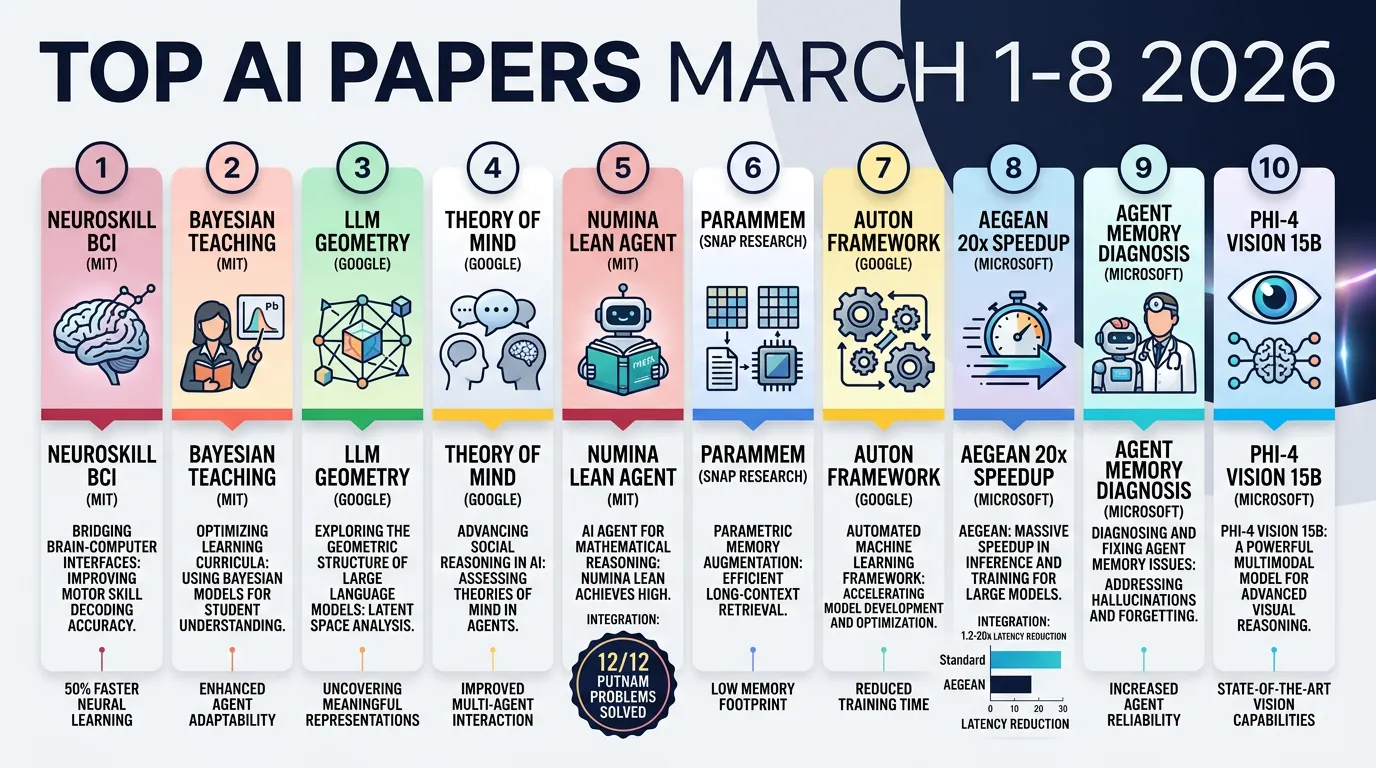

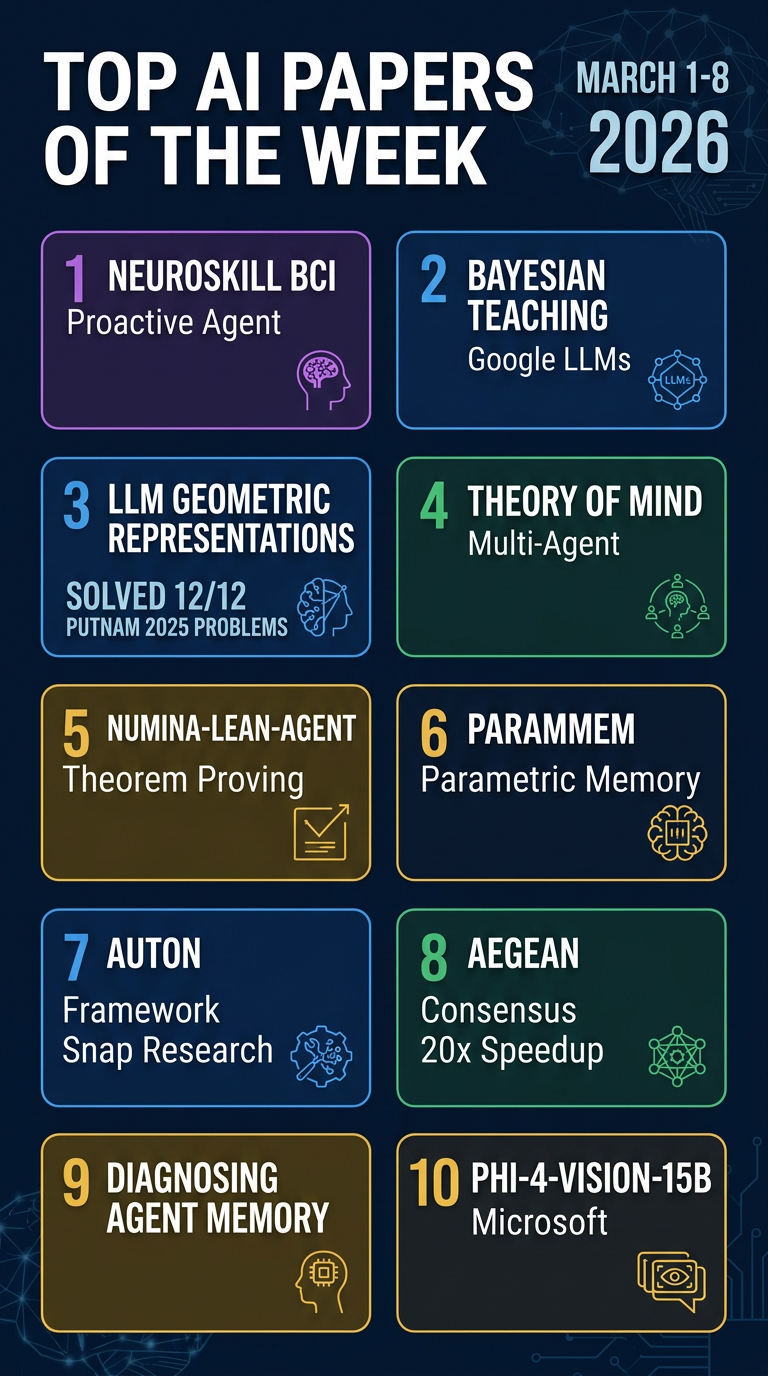

🥇Top AI Papers of the Week (March 1 - March 8)

Source: https://nlp.elvissaravia.com/p/top-ai-papers-of-the-week-8c6

Author: Elvis Saravia (AI Newsletter)

Date Processed: 2026-03-09

Summary

Elvis Saravia’s weekly roundup of top AI research for March 1–8, 2026 covers 10 papers spanning proactive agentic systems, probabilistic reasoning, multi-agent coordination, formal theorem proving, and memory in LLM agents. The article is free and fully accessible.

Main Themes

- Proactive & Embodied AI Agents: Systems that react to biological signals rather than waiting for explicit commands
- Reasoning Quality: Teaching LLMs Bayesian inference; understanding why geometric structures emerge in representations
- Multi-Agent Coordination: Theory of Mind, consensus protocols, memory diagnosis
- Formal Methods + Agents: General coding agents as automated theorem provers
- Memory & Reflection: Parametric memory for diverse self-reflection; retrieval as the bottleneck

Papers

1. NeuroSkill

Paper: https://arxiv.org/abs/2603.03212

MIT researchers introduce a real-time proactive agentic system that integrates Brain-Computer Interface (BCI) signals with foundation EXG models and text embeddings to model human cognitive and emotional state. Unlike reactive agents, NeuroSkill operates proactively — interpreting biophysical/neural signals to anticipate user needs before they ask.

- NeuroLoop: Custom agentic flow that processes BCI signals through a foundation EXG model, converts them to state-of-mind descriptions, and drives tool calls
- Fully offline edge deployment: Runs locally on edge devices with no network dependency, which is key for privacy and real-time latency
- Proactive vs. reactive: Detects confusion, cognitive overload, or emotional shifts and adjusts before the user explicitly asks
- Open-source: Released under GPLv3 with AI100 ethical licensing framework

2. Bayesian Teaching for LLMs

Paper: https://arxiv.org/abs/2503.17523

Google researchers fine-tune LLMs on synthetic interactions with a Bayesian Assistant that represents optimal probabilistic inference. LLMs normally fail normative Bayesian reasoning (base rate neglect, conservatism), but this training dramatically improves belief updating from new evidence.

- Bayesian Assistant as teacher: Synthetic training data from idealized probabilistic interactions
- Generalizes to new tasks: Transfers Bayesian reasoning to task types unseen during training
- Closes the gap: Substantially reduces systematic deviations from normative Bayesian predictions
- Data quality > model scale: Smaller models trained on Bayesian interactions outperform larger models reasoning from scratch
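
To make "base rate neglect" concrete, here is a minimal worked example of a single normative Bayesian update. The numbers (1% prior, 90% hit rate, 5% false-positive rate) are illustrative assumptions, not figures from the paper, and `posterior` is a hypothetical helper name.

```python
def posterior(prior: float, p_e_given_h: float, p_e_given_not_h: float) -> float:
    """Return P(H | E) by Bayes' rule for a binary hypothesis H."""
    numerator = p_e_given_h * prior
    evidence = numerator + p_e_given_not_h * (1.0 - prior)
    return numerator / evidence


# Illustrative numbers only (assumed, not taken from the paper).
prior = 0.01                # P(H): the base rate
hit_rate = 0.90             # P(E | H)
false_positive_rate = 0.05  # P(E | not H)

normative = posterior(prior, hit_rate, false_positive_rate)
print(f"normative posterior: {normative:.3f}")
```

A reasoner that neglects the base rate would report something close to the 0.90 hit rate; the normative answer here is far lower because the prior is small.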

3. Why LLMs Form Geometric Representations

Paper: https://arxiv.org/abs/2602.15029

LLMs spontaneously form striking geometric structures in internal representations — months organize into circles, historical years form spirals, spatial coordinates align to recoverable manifolds. This paper proves these emerge directly from translation symmetries in natural language statistics, not deep learning dynamics.

- Translation symmetry as root cause: Co-occurrence frequency between months depends only on the time interval, proving circular geometry emerges as optimal encoding
- Analytical derivation: Derives exact manifold geometry from data statistics rather than just observing post-hoc
- Spirals for continuums: Continuous concepts like historical years form compact 1D manifolds with characteristic extrinsic curvature
- Universal mechanism: Robust across different architectures; geometry emerges whenever co-occurrence statistics are controlled by an underlying latent variable
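
The link between translation symmetry and circles can be illustrated with a toy model. If co-occurrence between months depends only on their circular distance, the co-occurrence matrix is circulant, and cosine and sine vectors over the months are its eigenvectors, so a spectral embedding places the months on a circle. The sketch below assumes an exponentially decaying kernel, which is chosen for illustration and is not taken from the paper; the paper's derivation is more general.

```python
import math

N = 12  # number of months


def circular_distance(i: int, j: int, n: int = N) -> int:
    d = abs(i - j) % n
    return min(d, n - d)


# Assumed toy kernel: co-occurrence depends only on the circular interval.
C = [[math.exp(-circular_distance(i, j)) for j in range(N)] for i in range(N)]


def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def mode_eigenvalue(k: int) -> float:
    """Eigenvalue of the k-th Fourier mode of the symmetric circulant matrix C."""
    return sum(C[0][j] * math.cos(2 * math.pi * k * j / N) for j in range(N))


cos_vec = [math.cos(2 * math.pi * i / N) for i in range(N)]
sin_vec = [math.sin(2 * math.pi * i / N) for i in range(N)]
lam = mode_eigenvalue(1)

# Both residuals should be ~0: C @ v equals lam * v for v in {cos_vec, sin_vec}.
residual_cos = max(abs(a - lam * b) for a, b in zip(matvec(C, cos_vec), cos_vec))
residual_sin = max(abs(a - lam * b) for a, b in zip(matvec(C, sin_vec), sin_vec))

# Embedding month i as (cos_vec[i], sin_vec[i]) puts every month at radius 1.
radii = [math.hypot(c, s) for c, s in zip(cos_vec, sin_vec)]
```

For this kernel the first Fourier mode also carries the largest non-constant eigenvalue, which is why the two leading non-trivial embedding dimensions trace a circle.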

4. Theory of Mind in Multi-Agent LLMs

Paper: https://arxiv.org/abs/2603.00142

Multi-agent architecture combining Theory of Mind (ToM), Belief-Desire-Intention (BDI) models, and symbolic solvers for logical verification, evaluated on resource allocation problems. Counterintuitive finding: simply adding cognitive mechanisms does not automatically improve coordination.

- Integrated cognitive architecture: ToM + BDI + symbolic solvers layer human-like reasoning
- Model capability matters more: Stronger models benefit from ToM; weaker models are confused by the reasoning overhead
- Symbolic verification as stabilizer: Grounds agent decisions in formal constraints
- Practical implication: Match cognitive complexity to model capability, since ToM in underpowered models hurts

5. Numina-Lean-Agent

Paper: https://arxiv.org/abs/2601.14027

Paradigm shift in automated theorem proving: use a general coding agent (Claude Code + Numina-Lean-MCP) instead of complex specialized systems. The agent autonomously interacts with the Lean proof assistant while accessing theorem libraries.

- General agent over specialized provers: Performance improves simply by upgrading the base model, with no expensive retraining
- MCP-powered tool integration: Lean-LSP-MCP for proof assistant interaction, LeanDex for semantic theorem retrieval, informal prover for proof strategies
- State-of-the-art: Using Claude Opus 4.5, solves all 12 Putnam 2025 problems, matching best closed-source systems
- Open-source: Full system + solutions released on GitHub under Creative Commons BY 4.0

6. ParamMem

Paper: https://arxiv.org/abs/2602.23320

Self-reflection in LLM agents tends to produce repetitive reflections that add noise. ParamMem introduces a parametric memory module encoding cross-sample reflection patterns into model parameters, enabling diverse reflection via temperature-controlled sampling.

- Diversity correlates with success: Strong positive correlation between reflective diversity and task success
- Three-tier memory architecture: Parametric memory (cross-sample patterns) + episodic memory (individual instances) + cross-sample memory (global learning patterns)
- Weak-to-strong transfer: Reflection patterns learned by smaller models can be applied to larger ones
- Consistent benchmark gains: Outperforms SOTA baselines on code generation, mathematical reasoning, and multi-hop QA

7. Auton Agentic AI Framework

Paper: https://arxiv.org/abs/2602.23720

Snap Research introduces a declarative architecture for specification, governance, and runtime execution of autonomous agents. Addresses the fundamental mismatch: LLMs produce stochastic outputs, backend infrastructure requires deterministic, schema-conformant inputs.

- Cognitive Blueprint separation: Strict separation between declarative agent specification and Runtime Engine, which enables cross-language portability and formal auditability
- Formal execution model: Agent execution formalized as an augmented POMDP with latent reasoning space
- Biologically-inspired memory: Hierarchical memory consolidation inspired by biological episodic memory systems
- Runtime optimizations: Parallel graph execution, speculative inference, dynamic context pruning; safety via constraint manifold formalism
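
The mismatch between stochastic model output and schema-conformant backend input can be sketched as a validation gate. This is not Auton's API: `TOOL_CALL_SCHEMA` and `parse_tool_call` are hypothetical names, and the field-to-type schema is a deliberately minimal stand-in for a real declarative specification.

```python
import json

# Hypothetical declarative spec: required field name -> expected Python type.
TOOL_CALL_SCHEMA = {"tool": str, "arguments": dict}


def parse_tool_call(raw_output: str, schema: dict = TOOL_CALL_SCHEMA) -> dict:
    """Return a validated dict, or raise ValueError if the output does not conform."""
    try:
        payload = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(payload, dict):
        raise ValueError("top-level value must be a JSON object")
    for field, expected_type in schema.items():
        if field not in payload:
            raise ValueError(f"missing field: {field}")
        if not isinstance(payload[field], expected_type):
            raise ValueError(f"field {field!r} must be {expected_type.__name__}")
    extra = set(payload) - set(schema)
    if extra:
        raise ValueError(f"unexpected fields: {sorted(extra)}")
    return payload


# Only conformant output reaches the (deterministic) backend.
call = parse_tool_call('{"tool": "search", "arguments": {"query": "lean4"}}')
```

Keeping the schema as data rather than code is one way to get the auditability the bullets describe: the specification can be inspected without reading the runtime.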

8. Aegean — Consensus Protocol for Multi-Agent LLMs

Paper: https://arxiv.org/abs/2512.20184

Frames multi-agent refinement as a distributed consensus problem. Instead of static heuristic workflows with fixed loop limits, Aegean enables early termination when sufficient agents converge.

- 1.2–20x latency reduction across four mathematical reasoning benchmarks
- Maintains answer quality within 2.5% of standard approaches
- Consensus-aware serving engine performs incremental quorum detection across concurrent agent executions
- Cuts wasted compute on stragglers
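
The early-termination idea can be sketched as incremental quorum detection over answers in arrival order. This is a simplified illustration, not Aegean's protocol: it assumes agreement means exact answer match and a fixed quorum size, and `first_quorum` is a hypothetical name.

```python
from collections import Counter
from typing import Hashable, Iterable, Optional, Tuple


def first_quorum(
    answers: Iterable[Tuple[str, Hashable]], quorum: int
) -> Optional[Tuple[Hashable, int]]:
    """Consume (agent_id, answer) pairs in arrival order.

    Returns (answer, n_consumed) as soon as `quorum` distinct agents agree,
    or None if the stream ends first.
    """
    votes = Counter()
    seen_agents = set()
    for n_consumed, (agent_id, answer) in enumerate(answers, start=1):
        if agent_id in seen_agents:
            continue  # count each agent at most once
        seen_agents.add(agent_id)
        votes[answer] += 1
        if votes[answer] >= quorum:
            return answer, n_consumed
    return None


arrivals = [("a1", "42"), ("a2", "41"), ("a3", "42"), ("a4", "42"), ("a5", "7")]
result = first_quorum(arrivals, quorum=3)
print(result)  # ('42', 4): the fifth agent is never waited on
```

Because the function returns as soon as the threshold is met, slow agents that arrive after the quorum never add to the latency.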

9. Diagnosing Agent Memory

Paper: https://arxiv.org/abs/2603.02473

Diagnostic framework separating retrieval failures from utilization failures in LLM agent memory systems. 3×3 factorial study crossing three write strategies with three retrieval methods.

- Retrieval is the dominant bottleneck: Accounts for 11–46% of errors
- Utilization failures stable: 4–8% regardless of configuration
- Hybrid reranking cuts retrieval failures roughly in half, a larger gain than any write strategy optimization
- Actionable guidance: focus optimization effort on retrieval, not writing
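
One way to picture the retrieval-versus-utilization split is the decision rule below, applied per question within each cell of the 3×3 grid. The rule (wrong answer without the evidence retrieved is a retrieval failure; wrong answer with the evidence retrieved is a utilization failure) is an assumption about how such a diagnosis could work, and the strategy names are placeholders, not the ones used in the paper.

```python
from itertools import product


def classify(answer_correct: bool, evidence_retrieved: bool) -> str:
    """Attribute one outcome to retrieval or utilization (assumed rule)."""
    if answer_correct:
        return "correct"
    return "utilization_failure" if evidence_retrieved else "retrieval_failure"


# Placeholder names for the three write strategies and three retrieval methods.
WRITE_STRATEGIES = ["write_a", "write_b", "write_c"]
RETRIEVAL_METHODS = ["retrieve_x", "retrieve_y", "retrieve_z"]
GRID = list(product(WRITE_STRATEGIES, RETRIEVAL_METHODS))  # 9 configurations


def failure_rates(records):
    """records: iterable of (answer_correct, evidence_retrieved) for one configuration."""
    counts = {"correct": 0, "retrieval_failure": 0, "utilization_failure": 0}
    total = 0
    for answer_correct, evidence_retrieved in records:
        counts[classify(answer_correct, evidence_retrieved)] += 1
        total += 1
    if total == 0:
        return {label: 0.0 for label in counts}
    return {label: n / total for label, n in counts.items()}


# Made-up outcomes for a single configuration, for illustration only.
rates = failure_rates([(True, True), (False, False), (False, True), (False, False)])
```

Running `failure_rates` once per entry in `GRID` would yield the kind of per-configuration breakdown the bullets summarize.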

10. Phi-4-reasoning-vision-15B

Paper: https://arxiv.org/abs/2603.03975

Microsoft presents a compact open-weight multimodal reasoning model combining visual understanding with structured reasoning. Trained on just 200 billion tokens of multimodal data.

- Excels at math and science reasoning and UI comprehension
- Requires significantly less compute than comparable open-weight VLMs
- Key insight: systematic filtering, error correction, and synthetic augmentation are the primary levers for performance
- Pushes the Pareto frontier of accuracy–compute tradeoff

Key Takeaways

- Proactive AI is coming: NeuroSkill shows agents can anticipate needs via biological signals, not just text
- Data quality > scale: Bayesian Teaching and Phi-4 both reinforce that curated training data unlocks capabilities scale alone cannot
- Geometry is fundamental: LLMs don’t just learn facts; they learn structure. Circles, spirals, and manifolds emerge from statistical regularities
- General agents beat specialized systems: Numina-Lean-Agent solving all 12 Putnam problems with Claude Code is a landmark result
- Memory diagnosis matters: The dominant failure mode in agent memory is retrieval, not utilization, so fix retrieval first
- Consensus saves compute: Aegean’s latency reduction of up to 20x shows distributed systems thinking has direct payoffs for LLM agent efficiency

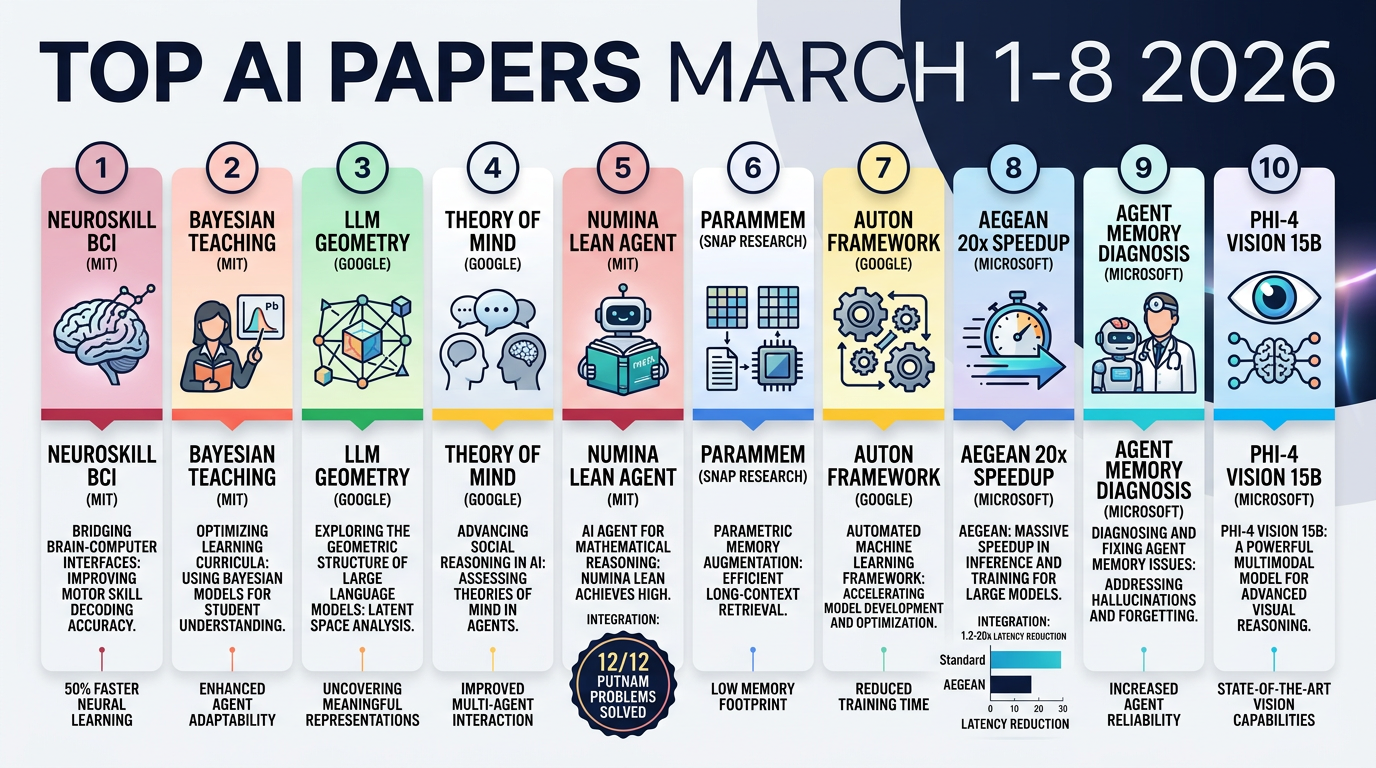

#ai-newsletter #ai-papers #research #agents #reasoning #memory #multimodal