AI Agents Weekly - GPT-5.3-Codex-Spark

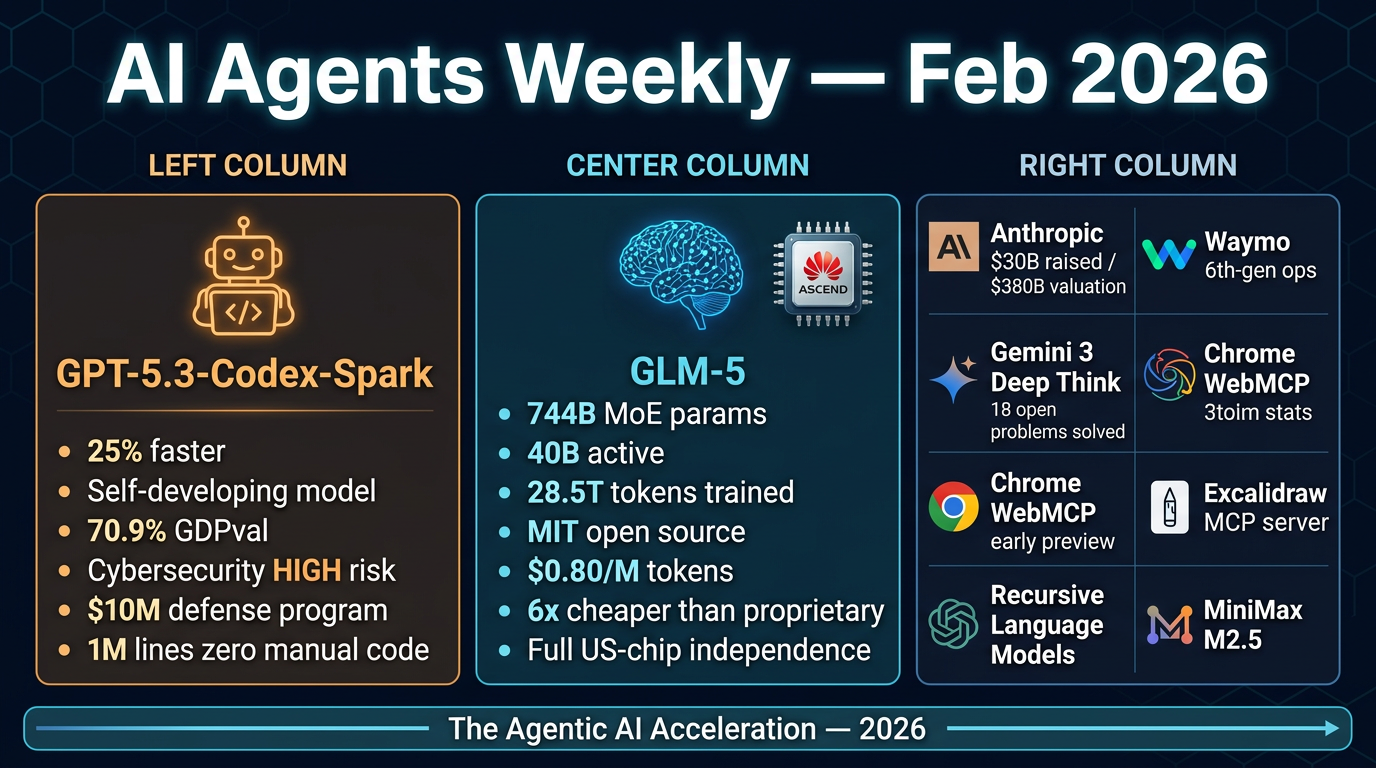

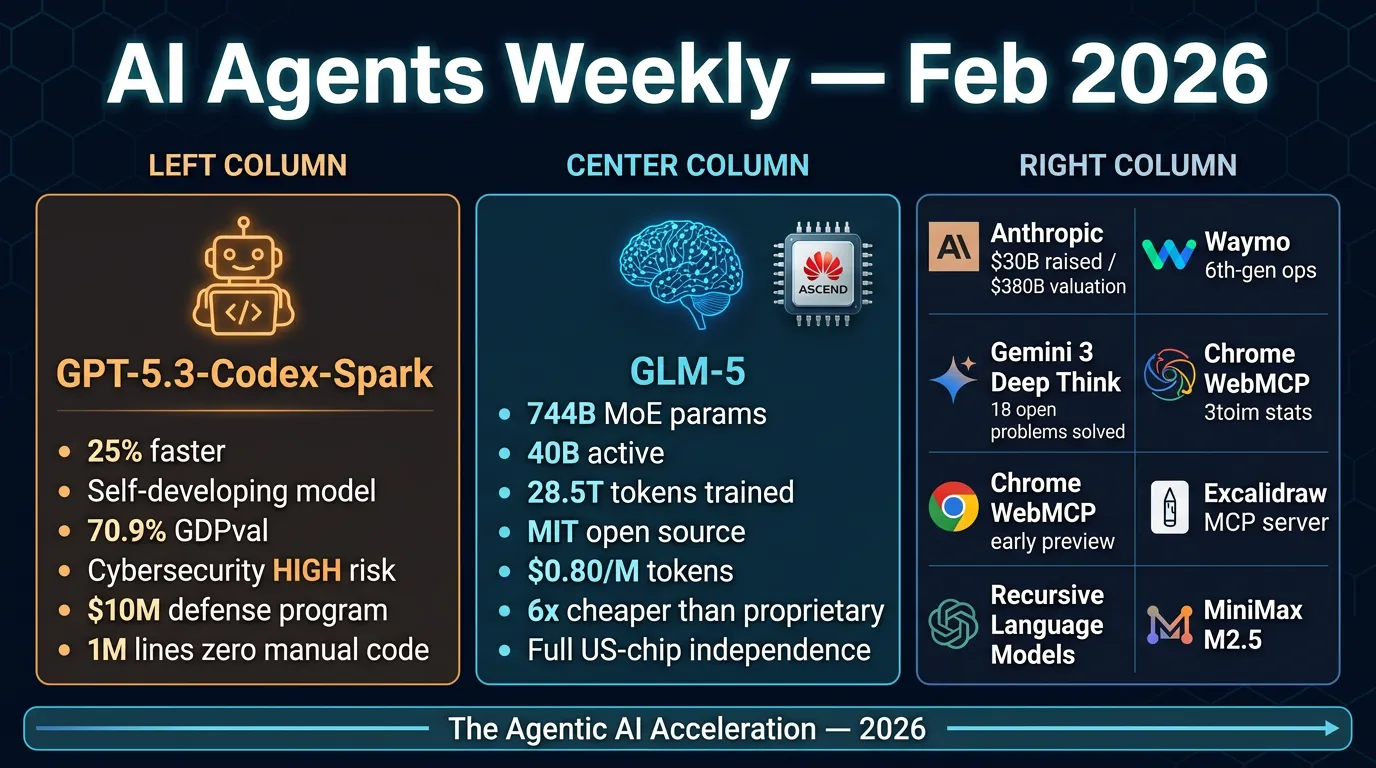

AI Agents Weekly: GPT-5.3-Codex-Spark, GLM-5, MiniMax M2.5 & More

From Elvis Saravia’s AI Newsletter — February 14, 2026

Main Thesis

This edition covers a wave of major AI model releases and agentic AI developments, highlighting rapid progress in autonomous coding agents, open-source frontier models, and the expanding capabilities — and risks — of next-generation AI systems.

Key Stories (Accessible Content)

🔥 GPT-5.3-Codex-Spark (OpenAI)

- OpenAI’s most capable agentic coding model to date, running 25% faster than its predecessor.

- Self-developing milestone: OpenAI used early GPT-5.3 versions to debug the model's own training, manage deployment, and diagnose evaluations, making it the first OpenAI model instrumental in its own creation.

- Beyond coding: Handles professional knowledge-work outputs including presentations, spreadsheets, and documentation. Wins or ties 70.9% of evaluations on the GDPval knowledge-work benchmark.

- Cybersecurity flag: OpenAI's first model rated “high” for cybersecurity capability under its Preparedness Framework, meaning it could meaningfully enable real-world cyber harm if misused. In response, OpenAI announced a $10M API credits program for cyber defense research.

- Notably, OpenAI reportedly shipped 1M lines of code using this model, with none of it written manually.

🧠 GLM-5 (Zhipu AI)

- A 744B-parameter Mixture-of-Experts (MoE) model with 40B active parameters, built specifically for agentic intelligence and multi-step reasoning.

- Hardware independence: Trained entirely on Huawei Ascend chips using the MindSpore framework — representing full independence from US-manufactured semiconductors.

- Agent Mode: Native autonomous task decomposition that breaks high-level objectives into subtasks with minimal human intervention. Can convert raw prompts into professional .docx, .pdf, and .xlsx documents.

- Training scale: Pre-trained on 28.5 trillion tokens (a 23.9% increase over GLM-4.7), using a novel RL technique achieving record-low hallucination rates.

- Open source & affordable: Released under MIT license with open weights. Available on OpenRouter at ~$0.80/M input tokens and ~$2.56/M output tokens — roughly 6x cheaper than comparable proprietary models.

- Competitive with frontier models across coding, creative writing, and complex problem-solving.
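
To put the GLM-5 figures above in concrete terms, here is a minimal Python sketch of the arithmetic they imply. The constants are the approximate numbers quoted in this edition. The function names and the sample workload (200,000 input tokens, 20,000 output tokens) are hypothetical and chosen only for illustration; actual OpenRouter billing may differ.

```python
# Figures as quoted in the newsletter; prices are approximate ("~") in the source.
INPUT_USD_PER_M = 0.80         # USD per 1M input tokens
OUTPUT_USD_PER_M = 2.56        # USD per 1M output tokens
TOTAL_PARAMS_B = 744           # total parameters, in billions
ACTIVE_PARAMS_B = 40           # active parameters per token, in billions
PRETRAIN_TOKENS_T = 28.5       # pre-training tokens, in trillions
INCREASE_OVER_GLM_4_7 = 0.239  # quoted 23.9% increase over GLM-4.7


def request_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request at the quoted per-million-token prices."""
    total = input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M
    return total / 1_000_000


def active_fraction() -> float:
    """Share of the MoE model's parameters that are active for a given token."""
    return ACTIVE_PARAMS_B / TOTAL_PARAMS_B


def implied_previous_tokens_t() -> float:
    """GLM-4.7 token count (trillions) implied by the quoted percentage increase."""
    return PRETRAIN_TOKENS_T / (1 + INCREASE_OVER_GLM_4_7)


if __name__ == "__main__":
    # Hypothetical workload, not taken from the newsletter.
    print(f"Sample request cost: ${request_cost_usd(200_000, 20_000):.4f}")
    print(f"Active parameter share: {active_fraction():.1%}")
    print(f"Implied GLM-4.7 tokens: {implied_previous_tokens_t():.1f}T")
```

Under these assumptions the sample request costs about $0.21, roughly 5.4% of the parameters are active per token, and the quoted increase implies about 23.0 trillion pre-training tokens for GLM-4.7. The last figure is derived from the percentages above and is not stated in the source.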

Additional Headlines (Paywalled — Titles Only)

- MiniMax M2.5 — New open-source model drop

- Recursive Language Models — Replacing context stuffing

- Agentica — Pushing ARC-AGI-2 with recursive agents

- Chrome WebMCP — Early preview launched

- Anthropic — Raises $30B at $380B valuation

- Excalidraw — Launches official MCP server

- Hive agent framework — Evolves at runtime

- Waymo — Begins 6th-gen autonomous operations

- Gemini 3 Deep Think — Solves 18 open mathematical/scientific problems

Practical Takeaways

- Agentic coding is maturing fast — GPT-5.3-Codex-Spark represents a new class of self-improving models that can participate in their own development cycle.

- Open-source is competitive — GLM-5 shows that MIT-licensed, open-weight models at frontier scale are now viable and dramatically cheaper than proprietary alternatives.

- Hardware geopolitics matter — GLM-5’s full Huawei/Ascend stack signals a credible alternative AI hardware ecosystem independent of NVIDIA/US chips.

- Cybersecurity risks are escalating — OpenAI’s own Preparedness Framework flagging a “high” risk level is a serious signal; developers should monitor AI safety guidelines closely.

- MCP (Model Context Protocol) is expanding — Both Chrome and Excalidraw launching MCP integrations suggests the protocol is becoming infrastructure-level for agentic AI.

Note: No arXiv papers were linked or cited in the accessible portion of this article.