AI Agents Weekly: Evaluating AGENTS.md & More

From Elvis Saravia’s AI Newsletter — February 28, 2026

Main Thesis

This issue covers a wide range of AI agent developments, with the headline story challenging a widely adopted practice: using repository-level context files (like AGENTS.md or CLAUDE.md) to guide coding agents. Counterintuitively, research shows these files may be doing more harm than good.

🔬 Key Finding: AGENTS.md Files Hurt Coding Agent Performance

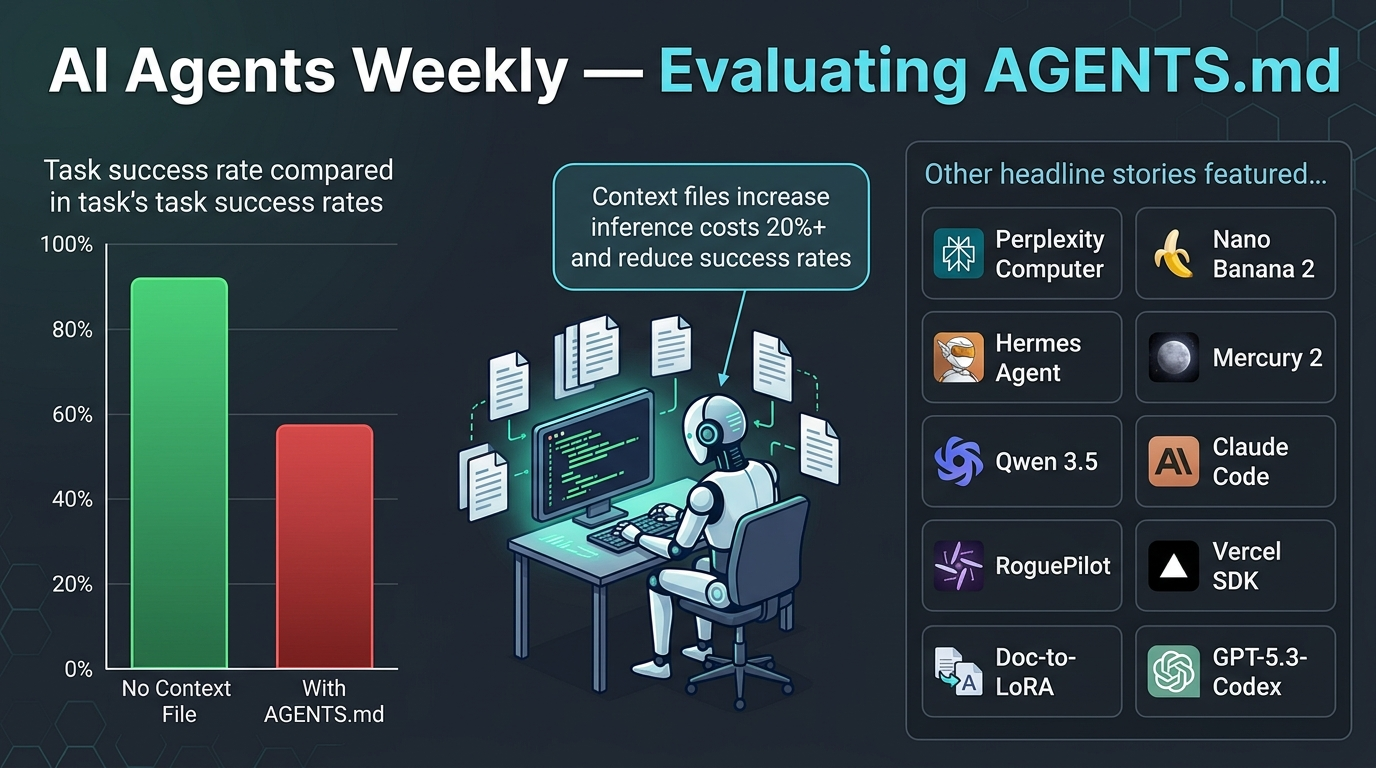

Researchers from UIUC and Microsoft Research evaluated whether repository-level context files actually improve coding agent performance on SWE-bench benchmarks.

Surprising results:

- ❌ Lower success rates — Both LLM-generated and human-written context files caused agents to solve fewer tasks compared to agents given no repository context at all.

- 💸 Higher inference costs — Context files increased inference costs by over 20%.

- 🔍 Broader but less effective exploration — Agents with context files explored more (more testing, more file traversal), but the additional constraints made tasks harder, not easier.

- ✅ Minimal is better — The authors recommend context files describe only minimal requirements rather than comprehensive specifications, as unnecessary constraints actively hurt performance.

Practical takeaway: Developers should rethink how they write AGENTS.md, CLAUDE.md, and similar files — focus on essential guardrails only, not exhaustive instructions.

📰 Other Stories Covered (Paywalled)

| Story | Summary |
|---|---|
| Perplexity Computer | Perplexity launches a computer-use agent for end-to-end task automation |
| Google Nano Banana 2 | Google releases the Nano Banana 2 model for free |
| Sakana AI Doc-to-LoRA & Text-to-LoRA | Tools for fine-tuning models directly from documents or text |
| Notion Custom Agents 3.3 | Notion launches custom agent capabilities in version 3.3 |
| Nous Research Hermes Agent | Open-source agent model released by Nous Research |
| GPT-5.3-Codex | OpenAI makes GPT-5.3-Codex available to all developers |
| Mercury 2 | New reasoning diffusion LLM ships |
| Qwen 3.5 Medium Series | Alibaba drops a new medium-sized Qwen model series |
| Claude Code Auto-Memory | Anthropic ships auto-memory across sessions for Claude Code |
| RoguePilot | Security vulnerability exposed in GitHub Copilot |
| Vercel Chat SDK | Vercel open-sources a Chat SDK for multi-platform bot development |

💡 Practical Takeaways

- Less is more when writing agent context files — avoid over-specifying agent behaviour.

- Benchmark your context files — don’t assume that more instructions equals better agent performance.

- The AI tooling ecosystem is rapidly expanding across coding, browser automation, fine-tuning, and memory management.

- Security remains a concern, as RoguePilot highlights vulnerabilities in popular AI coding assistants such as GitHub Copilot.
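
To make the "benchmark your context files" takeaway concrete, here is a minimal sketch of how one might compare agent runs with and without a context file. It is not the researchers' evaluation harness: the `Run` record, the `summarize` and `compare` helpers, and the sample numbers are all hypothetical, and it assumes you already log, for each task, whether the agent solved it and what the run cost.

```python
from dataclasses import dataclass


@dataclass
class Run:
    """One agent attempt at one task (hypothetical record format)."""
    task_id: str
    with_context_file: bool
    solved: bool
    cost_usd: float


def summarize(runs: list[Run], with_context_file: bool) -> tuple[float, float]:
    """Return (success rate, mean cost per run) for one condition."""
    subset = [r for r in runs if r.with_context_file == with_context_file]
    if not subset:
        raise ValueError("no runs recorded for this condition")
    success_rate = sum(r.solved for r in subset) / len(subset)
    mean_cost = sum(r.cost_usd for r in subset) / len(subset)
    return success_rate, mean_cost


def compare(runs: list[Run]) -> dict[str, float]:
    """Compare the context-file condition against the no-context baseline."""
    base_rate, base_cost = summarize(runs, with_context_file=False)
    ctx_rate, ctx_cost = summarize(runs, with_context_file=True)
    if base_cost == 0:
        raise ValueError("baseline cost is zero; relative change is undefined")
    return {
        # Negative means the context file solved fewer tasks.
        "success_rate_delta": ctx_rate - base_rate,
        # 0.25 means runs with the context file cost 25% more on average.
        "relative_cost_change": (ctx_cost - base_cost) / base_cost,
    }


if __name__ == "__main__":
    # Made-up placeholder data, not results from the study.
    sample = [
        Run("t1", False, True, 1.0), Run("t2", False, True, 1.0),
        Run("t3", False, True, 1.0), Run("t4", False, False, 1.0),
        Run("t1", True, True, 1.25), Run("t2", True, True, 1.25),
        Run("t3", True, False, 1.25), Run("t4", True, False, 1.25),
    ]
    print(compare(sample))
```

Running both conditions on the same task set keeps the comparison paired. With only a handful of tasks the deltas will be noisy, so treat small differences as inconclusive.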