AI Agents Weekly - Claude Sonnet

AI Agents Weekly: Claude Sonnet 4.6, Gemini 3.1 Pro, Stripe Minions & More

From Elvis Saravia’s AI Newsletter — February 21, 2026

Overview

This issue covers a packed week of AI agent developments, spanning major model releases, infrastructure tooling, agentic benchmarks, and real-world agent deployments.

🔥 Top Stories (Publicly Accessible)

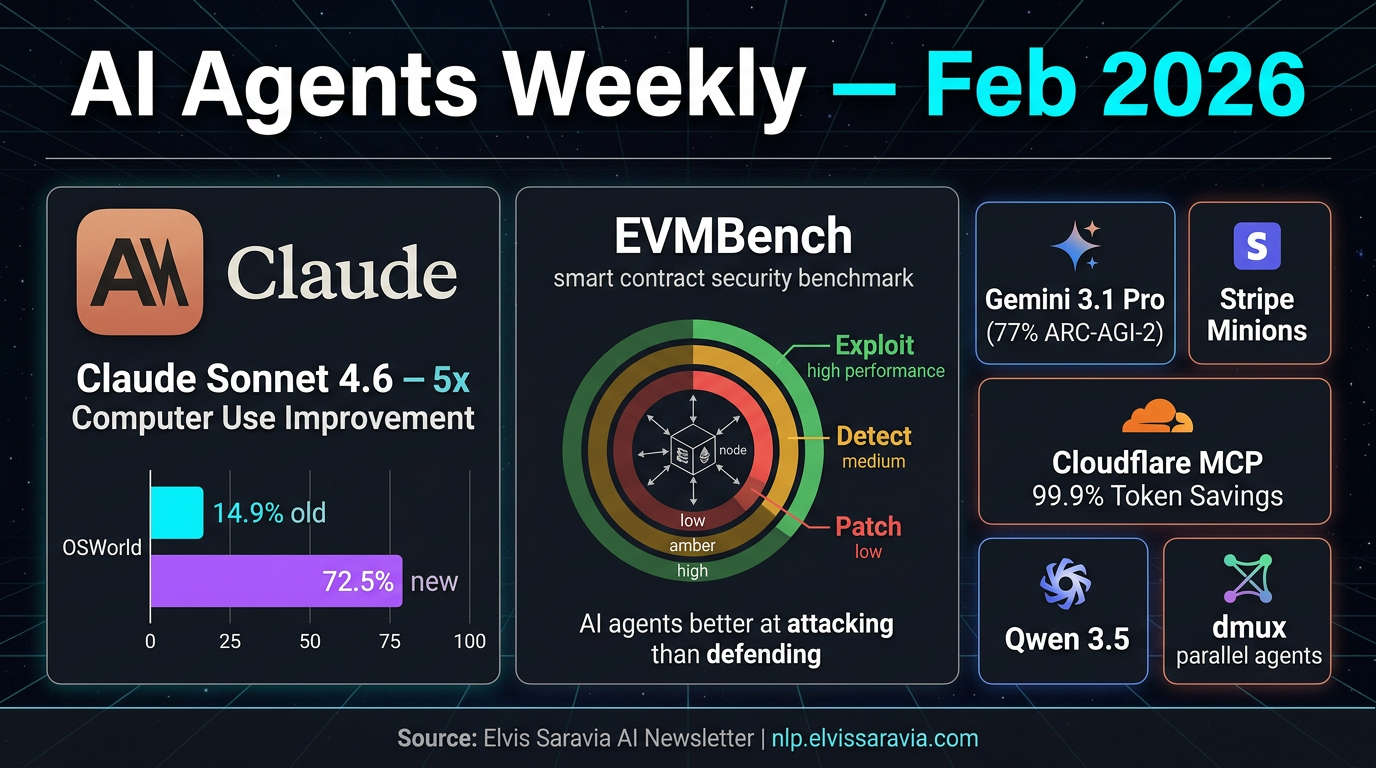

1. Claude Sonnet 4.6 — Anthropic

Anthropic released Claude Sonnet 4.6 as the new default model for all Claude users on February 17, 2026.

Key highlights:

- Computer Use Breakthrough: OSWorld scores jumped from 14.9% → 72.5% — a nearly 5x improvement — making it the most capable model for autonomous GUI-based agent workflows.

- 1M Token Context Window: Available in beta, enabling agents to process entire codebases, long documents, and multi-session histories without losing earlier context.

- User Preference: In blind A/B tests, users preferred Sonnet 4.6 over Sonnet 4.5 roughly 70% of the time, especially in coding, instruction following, and nuanced reasoning.

- Pricing: $3/$15 per million input/output tokens — cost-efficient for high-volume agentic deployments.

Practical Takeaway: Sonnet 4.6 is positioned as the go-to model for autonomous agent workflows, particularly those involving computer use, long-context reasoning, and code generation.

2. EVMBench — AI Agents vs. Smart Contract Security

OpenAI and Paradigm introduced EVMBench, a benchmark evaluating AI agents on smart contract security tasks across 120 curated vulnerabilities from 40 audits.

Key findings:

- Exploit tasks are handled best — agents perform well when the goal is explicit (e.g., drain funds iteratively).

- Detect and Patch tasks are harder — agents struggle with exhaustive auditing and maintaining full contract functionality after patching.

- Detection Gap: Agents tend to stop after finding a single vulnerability rather than performing comprehensive audits — a critical limitation for security-critical deployments.

- Scenarios sourced from open code audit competitions and Tempo blockchain (a purpose-built L1 for high-throughput stablecoin payments).

Practical Takeaway: AI agents show promise in offensive security (exploit generation) but are not yet reliable enough for defensive, exhaustive smart contract auditing without human oversight.

📰 Paywalled Headlines (Titles Only)

The following stories are mentioned but locked behind the paid subscription:

- Gemini 3.1 Pro — Google launches with 77% ARC-AGI-2 score

- Stripe Minions — Coding agents shipped at scale

- Cloudflare Code Mode MCP — 99.9% token savings reported

- Qwen 3.5 — Alibaba drops new model with agentic vision capabilities

- ggml.ai joins Hugging Face — Local AI inference collaboration

- Anthropic measures AI agent autonomy in practice

- AI agent autonomously publishes a hit piece — Autonomous content generation controversy

- dmux — Multiplexes AI coding agents in parallel

- New benchmarks for agent memory and reliability

📄 Papers Mentioned

No direct arxiv.org links were included in the accessible portion of the article. EVMBench is referenced via a blog post (no arxiv link provided in the visible content).

🧠 Key Takeaways

- Claude Sonnet 4.6 represents a step-change in computer use capability — the 5x OSWorld improvement is significant for production agent deployments.

- EVMBench highlights that AI agents are better attackers than defenders in smart contract security — important for teams considering AI-assisted auditing.

- The week broadly signals a maturing agentic infrastructure layer — from MCP tooling (Cloudflare) to parallel agent orchestration (dmux) to memory benchmarking.

- Cost-efficient, long-context models like Sonnet 4.6 are making large-scale multi-agent systems increasingly viable.