🤖 AI Agents Weekly: Claude Code Review, AutoHarness, Perplexity Personal Computer, Cloudflare /crawl, Context7 CLI…

Original article: https://nlp.elvissaravia.com/p/ai-agents-weekly-claude-code-review

Processed: March 15, 2026 | Source: Elvis Saravia — AI Newsletter

⚠️ Note: The full article is paywalled. This summary is based on the free preview and table of contents.

Summary

This week’s AI Agents Weekly covers a broad range of agent tooling, infrastructure, and research developments across the industry.

In this issue:

- Claude ships multi-agent Code Review

- AutoHarness makes small agents beat large ones

- Perplexity launches an always-on Personal Computer

- Cloudflare ships a one-call /crawl endpoint

- Context7 CLI brings docs to any agent

- Andrew Ng launches Context Hub

- Cursor Marketplace adds 30+ plugins

- OpenAI shares Skills for Agents SDK

- Google launches Gemini Embedding 2

- Meta ships four MTIA chips in two years

- Codex agent files taxes, catches $20K error

Top Stories

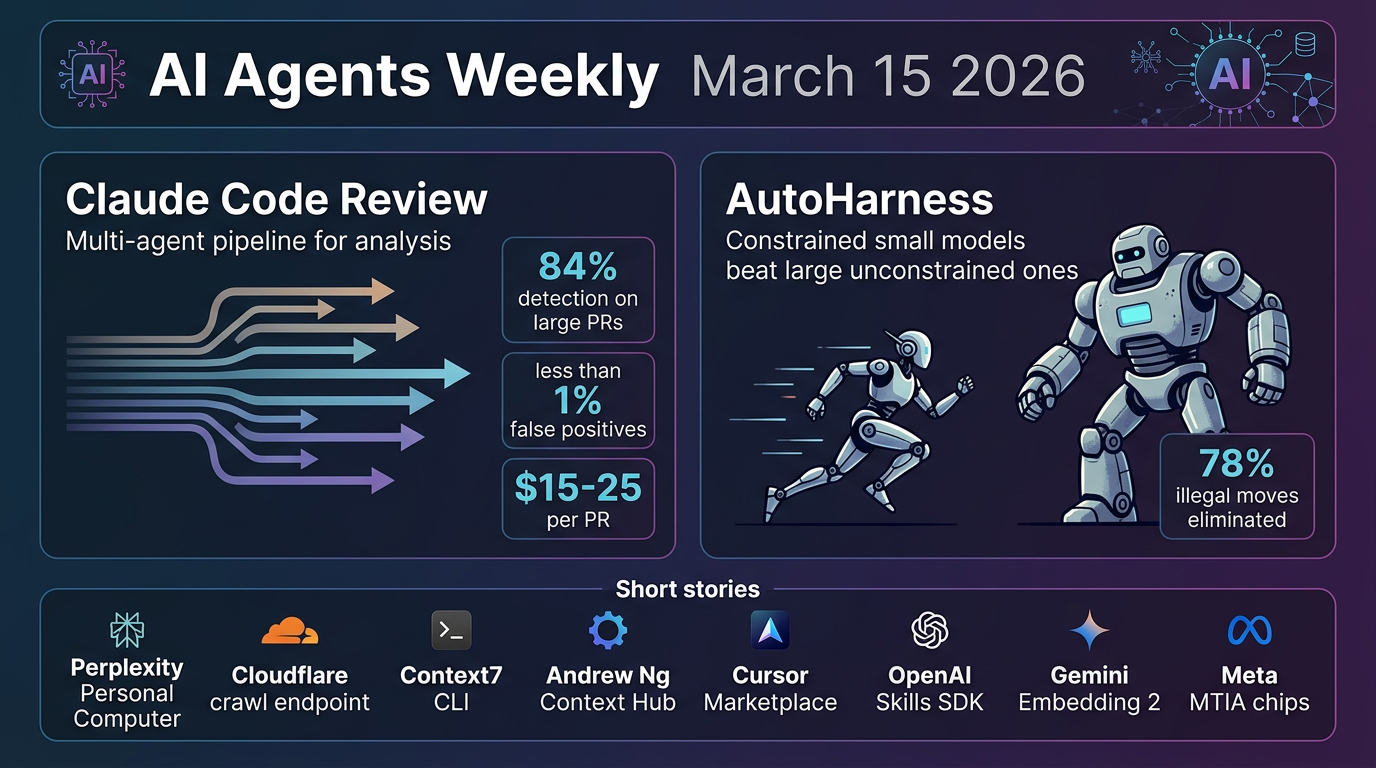

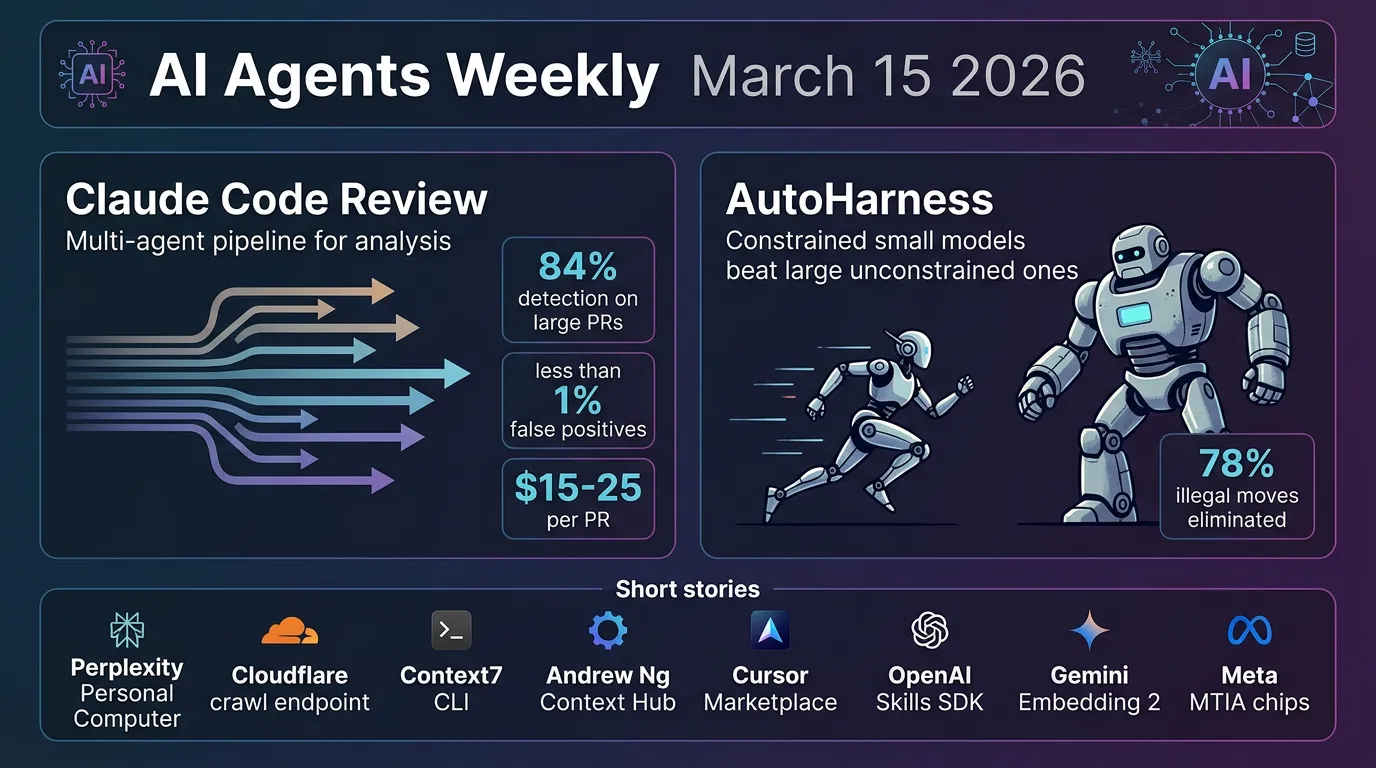

Claude Code Review

Anthropic launched Code Review for Claude Code — an automated system that dispatches multiple AI agents to examine every pull request. Instead of a single pass, parallel agents identify potential issues, verify findings to eliminate false positives, and rank bugs by severity, delivering a consolidated overview comment plus targeted inline annotations.

Key details:

- Multi-agent architecture: The system operates in parallel — agents scan, verify, and prioritize issues independently, producing both a summary comment and inline code annotations for specific problems.

- Scales with complexity: Review depth adjusts based on PR size. Large PRs (over 1,000 lines) received findings 84% of the time, averaging 7.5 issues per PR. Small PRs (under 50 lines) had findings 31% of the time.

- High precision: Less than 1% of flagged issues were marked incorrect by Anthropic engineers, with the system catching production-critical bugs that appeared routine in diffs.

- Pricing and access: Available now as a research preview for Team and Enterprise customers. Reviews average $15–25 per PR, billed on token usage, with configurable monthly caps and per-repo controls.

📎 Blog

AutoHarness: Automated Agent Constraint Synthesis

Researchers introduced AutoHarness, a technique that lets LLMs automatically synthesize protective code harnesses around themselves, preventing illegal actions without human-written constraints. Instead of relying on larger, more expensive models, the approach uses iterative code refinement with environmental feedback to generate custom safeguards — making smaller models outperform bigger unconstrained ones.

Key details:

- Massive illegal action problem: In a recent LLM chess competition, 78% of Gemini-2.5-Flash losses were attributed to illegal moves. AutoHarness eliminates this class of failure entirely by generating harnesses that enforce valid actions across 145 different TextArena games.

- Small beats large: Gemini-2.5-Flash with a synthesized harness exceeded Gemini-2.5-Pro’s performance while reducing costs — demonstrating that proper constraints are more valuable than raw model scale for agent environments.

- Zero-shot generalization: The technique extends beyond game-playing to generating full policies in code, eliminating runtime LLM decision-making entirely and achieving higher rewards than GPT-5.2-High on certain benchmarks.

- Practical agent pattern: The core insight applies broadly to any agent deployment — rather than trusting a model to self-constrain, auto-generate a verified harness that makes illegal states unreachable, shifting safety from model behavior to environment design.

📎 Paper

More This Week (Preview — Paywalled)

- Perplexity Personal Computer — always-on AI computer product

- Cloudflare /crawl — one-call endpoint for web crawling

- Context7 CLI — brings documentation to any agent

- Andrew Ng Context Hub — new platform from the AI pioneer

- Cursor Marketplace — 30+ new plugins for the AI editor

- OpenAI Skills for Agents SDK — new SDK capabilities

- Google Gemini Embedding 2 — next-gen embedding model

- Meta MTIA chips — four chips shipped in two years

- Codex filing taxes — agent catches a $20K error

Key Takeaways

- Multi-agent verification is production-ready — Claude Code Review demonstrates that parallel agent pipelines with cross-verification can achieve less than 1% false positive rates at scale, making agentic code review commercially viable.

- Constraints beat raw capability — AutoHarness shows that wrapping smaller models with auto-generated behavioral harnesses can outperform larger unconstrained models, suggesting the future of reliable agents is in environment design, not just model scaling.

- Agent infrastructure is maturing rapidly — Cloudflare /crawl, Context7 CLI, OpenAI Skills SDK, and Cursor Marketplace all signal a converging ecosystem of primitives designed specifically for agentic workflows.

- Custom silicon is accelerating — Meta shipping four MTIA chips in two years reflects the industry-wide push to build dedicated inference hardware for AI workloads.

Infographics