AI Agents of the Week: Papers You Should Know About (March 22, 2026)

Original article: AI Agents of the Week: Papers You Should Know About — LLM Watch by Pascal Biese, March 22, 2026

Summary

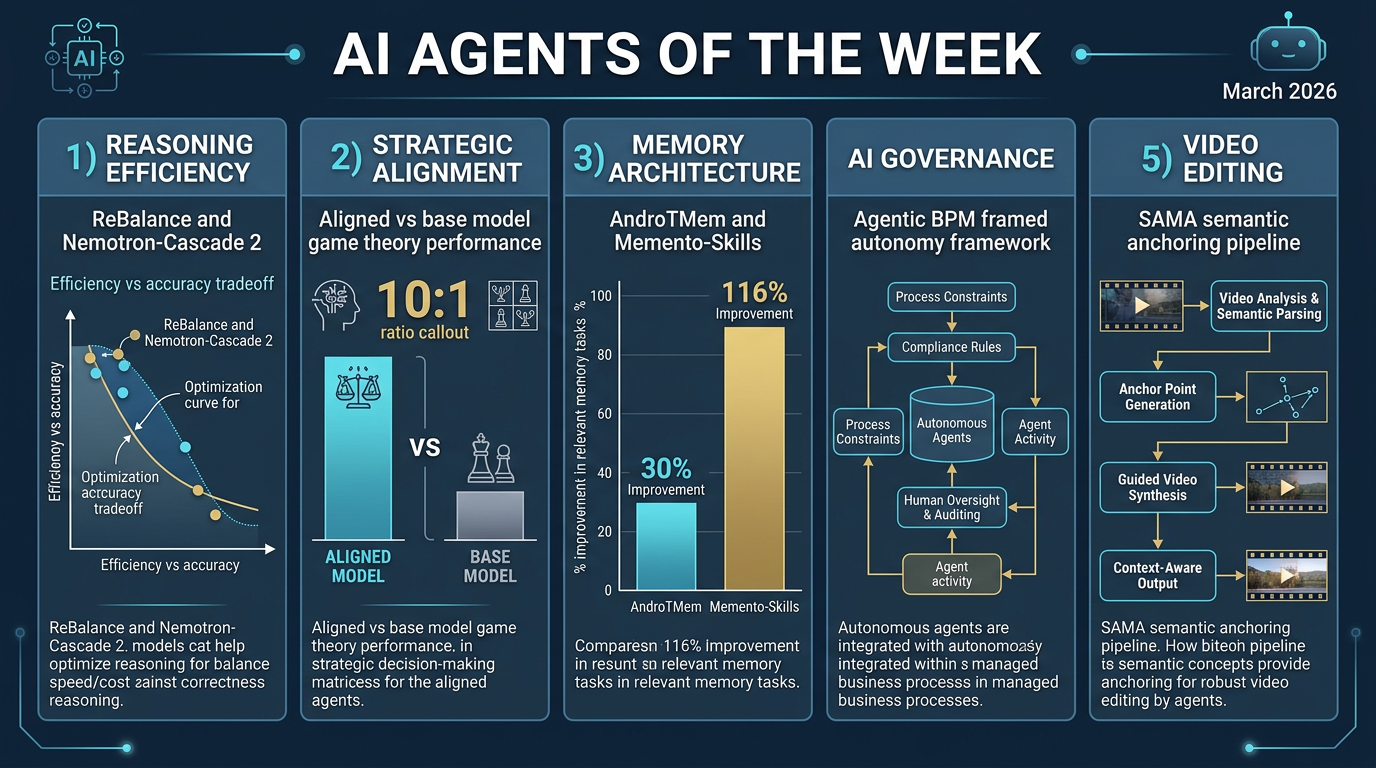

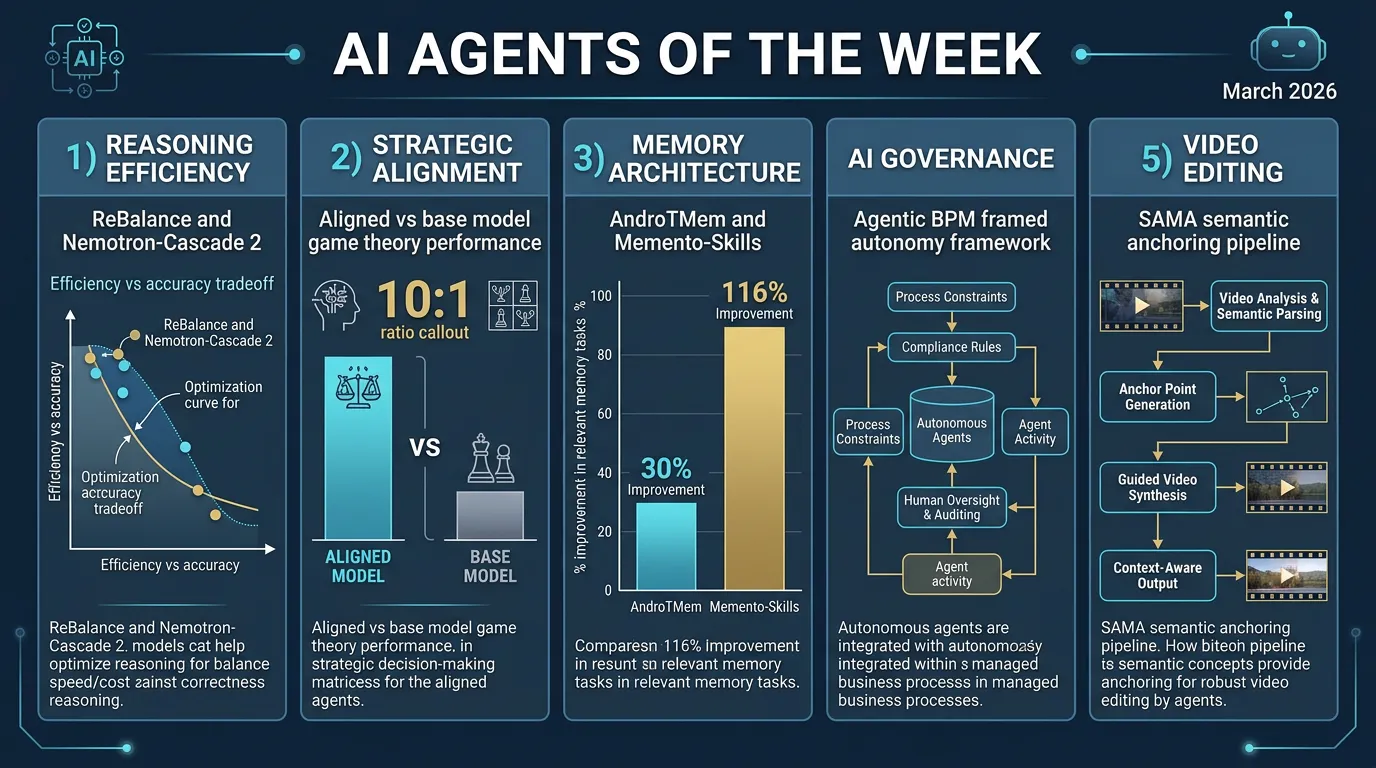

This week’s LLM Watch rounds up five standout research papers at the frontier of AI agents, covering reasoning efficiency, strategic alignment, memory architecture, organizational governance, and video editing. Here’s a detailed breakdown:

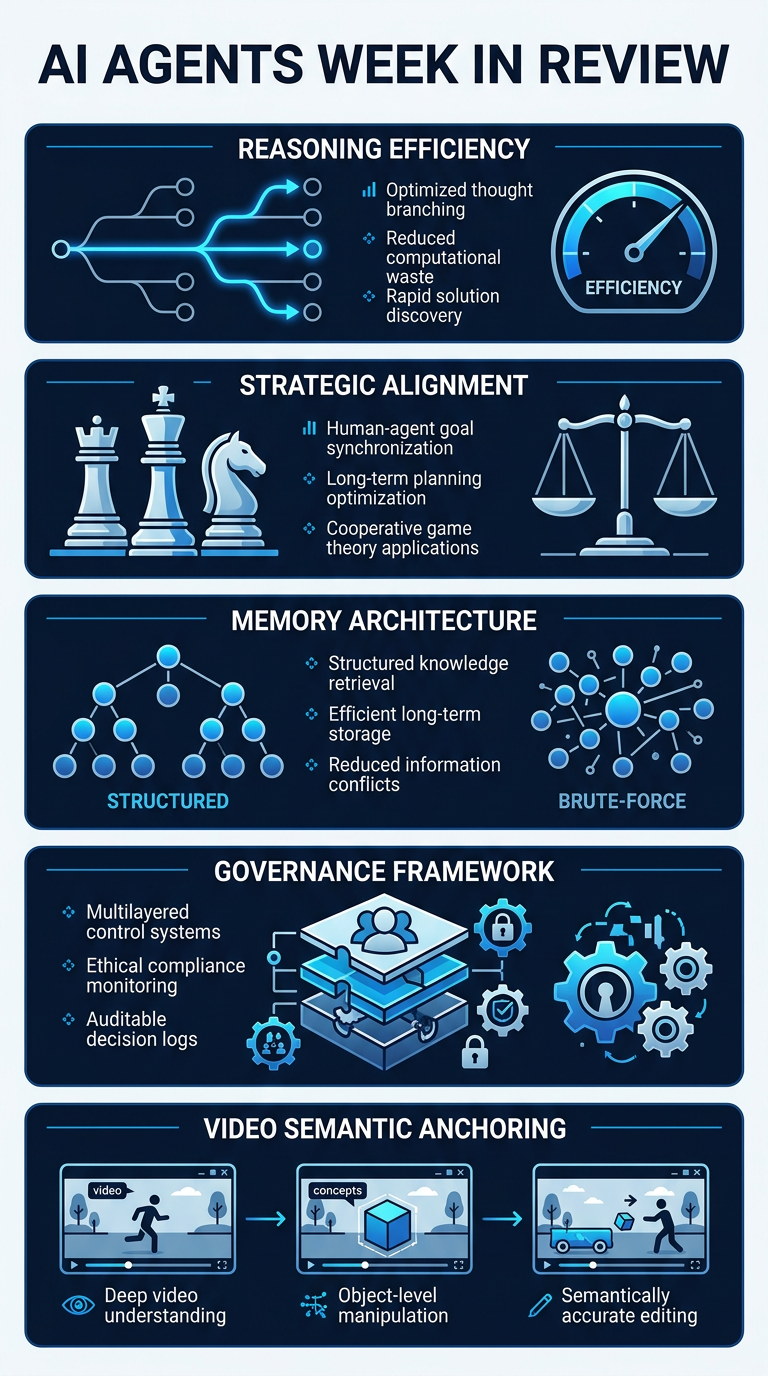

1. Reasoning Efficiency and Balanced Thinking

Large Reasoning Models (LRMs) are powerful but notoriously wasteful—they overthink simple problems and underthink hard ones. Two papers tackle this from opposite ends:

- ReBalance (arxiv.org/abs/2603.12372): A training-free framework that uses confidence-based steering vectors to dynamically prune redundant reasoning steps or promote deeper exploration in real time. Tested across nine benchmarks and four model sizes (0.5B to 32B), it improves accuracy while reducing output length.

- Nemotron-Cascade 2 (arxiv.org/abs/2603.19220): Demonstrates that intensive post-training via Cascade RL and multi-domain on-policy distillation can achieve gold-medal-level math and coding reasoning in a 30B MoE model with only 3B activated parameters—matching frontier model performance with 20× fewer parameters.

Key tension: Steer the reasoning you have, or distill better reasoning into a smaller model?

2. Strategic Alignment and Game-Theoretic Behavior

A fascinating paradox emerges at the intersection of alignment and multi-agent strategy:

- Alignment Makes Language Models Normative, Not Descriptive (arxiv.org/abs/2603.17218): Aligned models outperform base models on textbook one-shot games, but lose to base models by nearly 10:1 when predicting real human choices in multi-round strategic interactions (bargaining, negotiation, repeated games with reciprocity and retaliation).

- Reasonably Reasoning AI Agents Can Avoid Game-Theoretic Failures (arxiv.org/abs/2603.18563): Off-the-shelf reasoning agents can achieve Nash-like equilibrium play zero-shot—without any post-training alignment.

Practical implication: Alignment helps with normative compliance but may actively hinder realistic strategic behavior in competitive deployments.

3. Memory Architecture for Long-Horizon Agents

How agents remember matters more than how much they remember:

- AndroTMem (arxiv.org/abs/2603.18429): Diagnoses performance degradation in long-horizon GUI tasks as stemming from within-task memory failures. Proposes Anchored State Memory (ASM), improving task completion rates by 5%–30.16% over full-sequence replay.

- Memento-Skills (arxiv.org/abs/2603.18743): Agents build and refine a library of reusable markdown-based skills as externalized memory, achieving 26.2% and 116.2% relative accuracy improvements on the General AI Assistants benchmark and Humanity’s Last Exam, respectively.

Shared lesson: Structured, selective memory outperforms brute-force replay every time.

4. Governance and Organizational Deployment

- Agentic Business Process Management manifesto (arxiv.org/abs/2603.18916): Articulates a paradigm shift from traditional automation-oriented BPM toward systems built on “framed autonomy”—where agents perceive, reason, and act within explicit process frames. Demands explainability, conversational actionability, and self-modification capabilities. This creates a tension with self-improving architectures like Memento-Skills, where autonomous evolution may conflict with organizational control.

5. Instruction-Guided Generation and Semantic Anchoring

- SAMA (arxiv.org/abs/2603.19228): Addresses instruction-guided video editing by factorizing the problem into semantic anchoring and motion alignment. Pre-training on motion-centric restoration tasks yields strong zero-shot editing ability. Achieves state-of-the-art open-source performance competitive with commercial systems like Kling-Omni.

Transferable pattern for agents: Anchor the semantics, then align the dynamics—applicable anywhere agents must plan structural changes while preserving temporal coherence.

Key Takeaways

- Efficiency > scale: Smarter reasoning routing (ReBalance) or distillation (Nemotron-Cascade 2) can match frontier models at a fraction of the cost.

- Alignment trades off realism: Aligned models are better normative agents but worse predictors of real human behavior in strategic settings.

- Memory architecture is underrated: Selective, structured memory (ASM, Memento-Skills) delivers outsized gains over naive replay.

- Governance is the next bottleneck: As agents gain autonomy, organizational control frameworks (Agentic BPM) will define deployment success.

- Decomposition works: SAMA’s anchor-then-align pattern generalizes beyond video—it’s a blueprint for any agent managing change.

Processed by Angus on 2026-03-23 | Source: LLM Watch

Infographics