AI Agents of the Week – LLM Watch (Feb 22, 2026)

Main Thesis

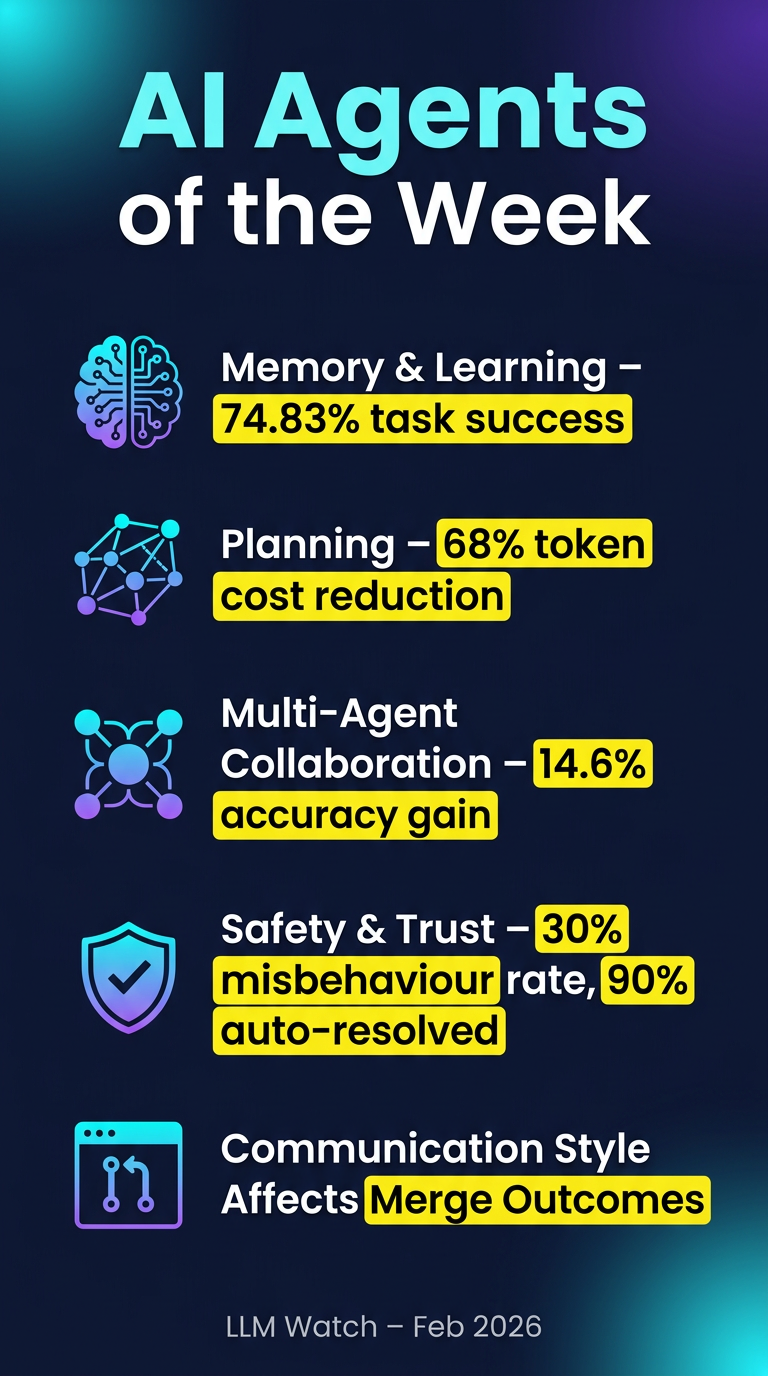

This weekly research roundup from LLM Watch highlights five key areas where AI agent research is rapidly advancing: memory & continual learning, planning under uncertainty, multi-agent collaboration, trust & safety, and practical tooling.

Key Findings

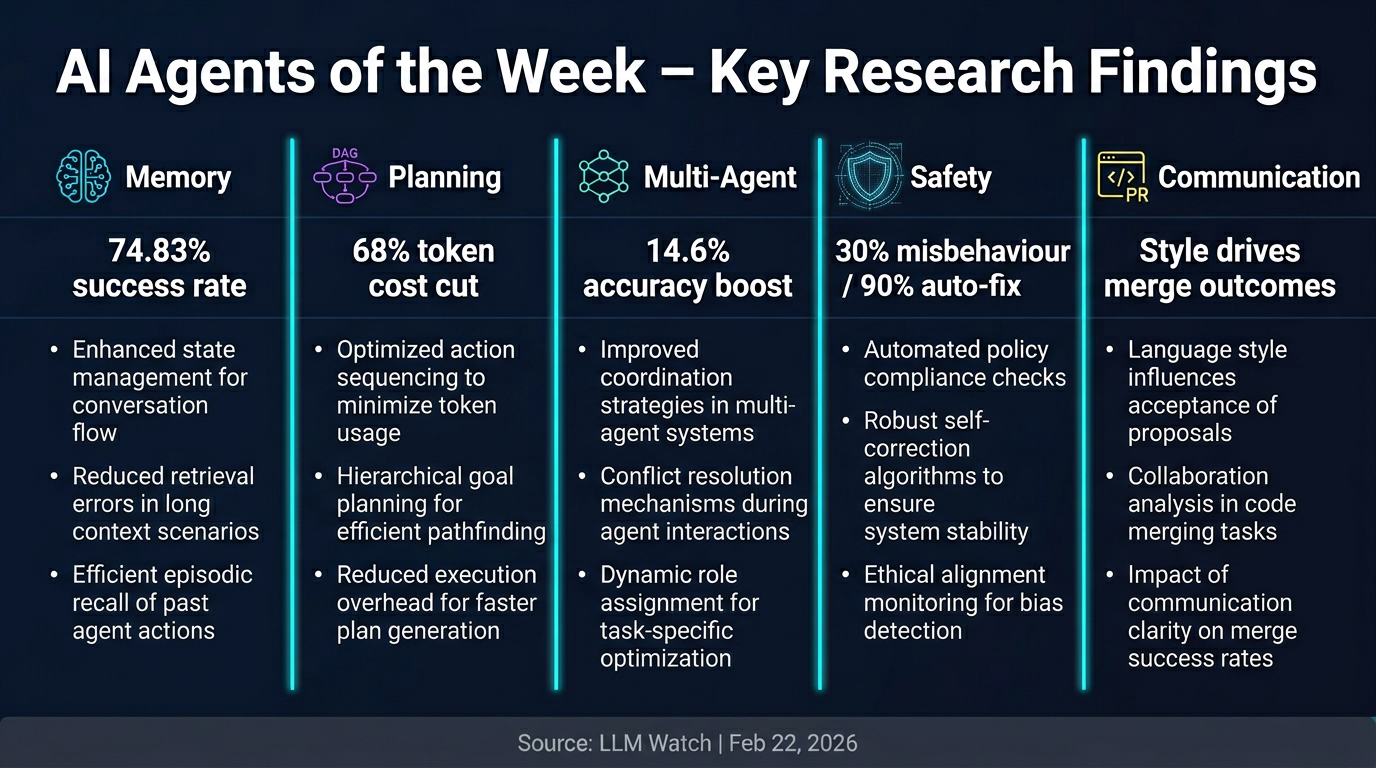

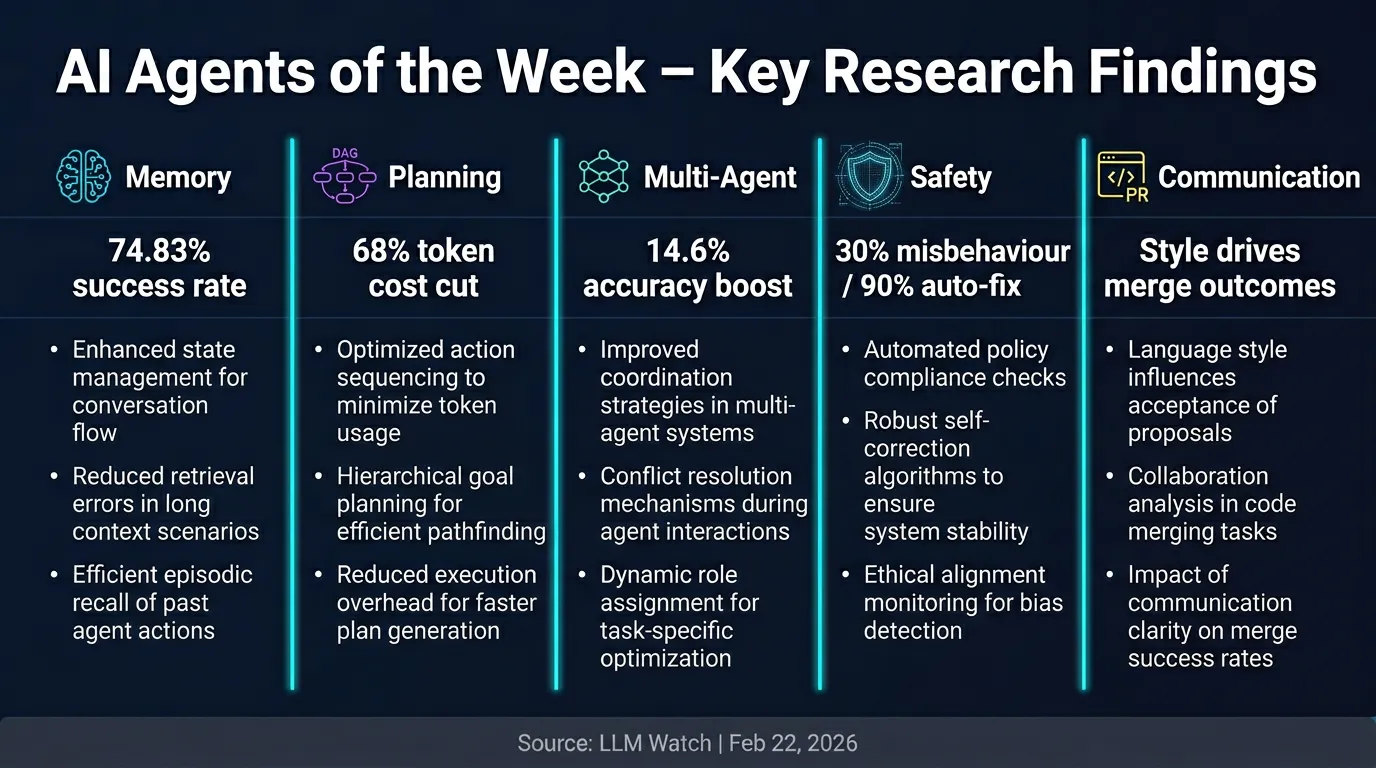

🧠 Memory & Continual Learning

- IntentCUA introduces intent-level representations that convert raw interaction traces into reusable skills.

- Achieves a 74.83% task success rate with a Step Efficiency Ratio of 0.91 on desktop automation tasks.

- Uses a coordinated Planner, Plan-Optimizer, and Critic sharing memory to stabilise long-horizon execution.
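The shared-memory arrangement described above can be pictured with a minimal sketch. This is not IntentCUA's implementation: the `SharedMemory` class, the `plan`, `optimize`, `critique` and `run` functions, and the placeholder step strings are all hypothetical names chosen for illustration. It only shows the general idea of three roles reading and writing one store keyed by intent.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SharedMemory:
    """Hypothetical store mapping an intent label to a reusable skill (a list of steps)."""
    skills: dict = field(default_factory=dict)

    def recall(self, intent: str) -> Optional[list]:
        return self.skills.get(intent)

    def store(self, intent: str, steps: list) -> None:
        self.skills[intent] = list(steps)


def plan(intent: str, memory: SharedMemory) -> list:
    """Planner: reuse a stored skill if one exists, otherwise draft a new plan."""
    cached = memory.recall(intent)
    if cached is not None:
        return list(cached)
    # Placeholder draft with a deliberately repeated step; a real planner
    # would query a model here.
    target = f"locate target for {intent}"
    return [target, target, f"perform {intent}"]


def optimize(steps: list) -> list:
    """Plan-Optimizer: drop consecutive duplicate steps."""
    cleaned = []
    for step in steps:
        if not cleaned or cleaned[-1] != step:
            cleaned.append(step)
    return cleaned


def critique(steps: list) -> bool:
    """Critic: accept any non-empty plan (a stand-in for a real check)."""
    return len(steps) > 0


def run(intent: str, memory: SharedMemory) -> list:
    """Plan, optimize, critique, and save accepted plans as reusable skills."""
    steps = optimize(plan(intent, memory))
    if critique(steps):
        memory.store(intent, steps)
    return steps
```

On a second call with the same intent, `plan` returns the stored skill instead of drafting from scratch, which is the reuse behaviour the bullet points describe at a high level.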

🗺️ Planning & Environment Interaction

- AgentConductor uses reinforcement learning to evolve multi-agent communication topologies dynamically.

- Delivers up to 14.6% improvement in pass@1 accuracy over baselines for code generation.

- Density-aware layered DAG construction reduces token costs by 68%, a major efficiency win for compute-constrained deployments.
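For readers unfamiliar with the pass@1 metric cited above, here is a small sketch of the unbiased pass@k estimator commonly used in code generation evaluation. The roundup does not say how AgentConductor computed its numbers, so treat this as background on the metric rather than the paper's evaluation code. The function name `pass_at_k` is an illustrative choice.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k for one problem from n samples, c of which pass the tests.

    Uses 1 - C(n - c, k) / C(n, k): one minus the probability that a random
    size-k subset of the n samples contains no passing sample.
    """
    if not 0 <= c <= n:
        raise ValueError("c must be between 0 and n")
    if not 1 <= k <= n:
        raise ValueError("k must be between 1 and n")
    if n - c < k:
        # Every size-k subset must contain at least one passing sample.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)
```

With `k = 1` the estimator reduces to `c / n`, the fraction of samples that pass. A benchmark score is then the mean of this value over all problems.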

🤝 Multi-Agent Collaboration & Control

- AgentConductor shows that adapting topology to task difficulty outperforms fixed communication graphs, with 13% density reductions alongside accuracy gains.

- AutoNumerics applies multi-agent orchestration to scientific computing, autonomously designing and verifying PDE solvers across 24 canonical problems.

- Key insight: the architecture of agent collaboration matters more than individual agent capability.
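To make "topology density" concrete, the sketch below measures the density of a layered communication DAG. It rests on an assumption: density is taken to be the number of edges divided by the maximum number of edges a DAG on the same nodes could have, n(n-1)/2. The paper may define it differently. The function name `dag_density` and the agent names are hypothetical.

```python
def dag_density(layers, edges):
    """Return edge density of a layered DAG.

    layers: list of lists of agent names, ordered from first to last layer.
    edges:  set of (src, dst) pairs; each edge must point to a later layer.
    Density is assumed to be |edges| / (n * (n - 1) / 2).
    """
    layer_of = {agent: i for i, layer in enumerate(layers) for agent in layer}
    for src, dst in edges:
        if layer_of[src] >= layer_of[dst]:
            raise ValueError(f"edge {src}->{dst} does not point to a later layer")
    n = len(layer_of)
    max_edges = n * (n - 1) // 2
    return len(edges) / max_edges if max_edges else 0.0


# Hypothetical four-agent topology in three layers.
layers = [["planner"], ["coder_a", "coder_b"], ["tester"]]
dense = {
    ("planner", "coder_a"), ("planner", "coder_b"), ("planner", "tester"),
    ("coder_a", "tester"), ("coder_b", "tester"),
}
sparse = {("planner", "coder_a"), ("coder_a", "tester")}
```

Here `dag_density(layers, dense)` is 5/6 and `dag_density(layers, sparse)` is 1/3. A sparser graph means fewer messages passed between agents, which is one way a topology can save tokens on easier tasks.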

🔒 Trust, Verification & Safety

- Wink is a production-deployed system for recovering from coding agent misbehaviours.

- Found that ~30% of all agent trajectories contain misbehaviours: Specification Drift, Reasoning Problems, or Tool Call Failures.

- Lightweight self-intervention resolves 90% of the misbehaviours that require only a single intervention, and it reduced engineer interventions in live A/B testing.

- CowCorpus provides a taxonomy of human intervention patterns, enabling models to predict user interventions with a 61.4–63.4% improvement over baselines.
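The recovery pattern described for Wink can be sketched as a detect-and-reprompt loop. This is an illustrative sketch under assumptions, not Wink's code: only the three category names come from the summary above, while the `GUIDANCE` messages, the `run_with_self_intervention` function, and the `act` and `detect` callables are hypothetical.

```python
from enum import Enum
from typing import Callable, Optional, Tuple


class Misbehaviour(Enum):
    SPECIFICATION_DRIFT = "specification drift"
    REASONING_PROBLEM = "reasoning problem"
    TOOL_CALL_FAILURE = "tool call failure"


# Hypothetical corrective hints, one per category.
GUIDANCE = {
    Misbehaviour.SPECIFICATION_DRIFT: "Re-read the original task and stay within its scope.",
    Misbehaviour.REASONING_PROBLEM: "Re-check your last conclusion against the evidence.",
    Misbehaviour.TOOL_CALL_FAILURE: "The tool call failed; verify the tool name and arguments.",
}


def run_with_self_intervention(
    act: Callable[[Optional[str]], str],
    detect: Callable[[str], Optional[Misbehaviour]],
    max_interventions: int = 1,
) -> Tuple[str, int]:
    """Run an agent step, re-prompting with guidance when a misbehaviour is flagged.

    Returns the accepted output and the number of interventions used.
    Raises RuntimeError when the budget is exhausted, signalling escalation.
    """
    hint = None
    for used in range(max_interventions + 1):
        output = act(hint)
        problem = detect(output)
        if problem is None:
            return output, used
        hint = GUIDANCE[problem]
    raise RuntimeError("misbehaviour persisted; escalate to a human")
```

The `max_interventions` budget separates the cases a single nudge can fix from those that still need an engineer.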

🛠️ Tools & Frameworks in Practice

- How AI Coding Agents Communicate analyses pull requests across five AI coding agents.

- Finds that presentation style correlates with reviewer engagement and merge outcomes: agents that communicate clearly get their PRs merged more often.

Practical Takeaways

- Build for long horizons: Intent-level memory abstraction (IntentCUA) is a viable path to more reliable long-running agents.

- Dynamic topology > static graphs: Fixed multi-agent communication structures leave significant performance and cost on the table.

- Expect misbehaviour in ~30% of trajectories: Production agent systems need built-in recovery mechanisms, not just prevention.

- Human-in-the-loop is predictable: Models can now anticipate when humans will intervene, enabling proactive agent self-correction.

- Agent communication style matters: How an agent explains its work affects real-world outcomes like code review acceptance.