55% of employers regret AI-driven layoffs. The agents are good at tasks and terrible at jobs. Here's what that means…

Original article: Read on Nate’s Substack

Published: March 21, 2026 | Processed: March 22, 2026

Summary

Main Thesis

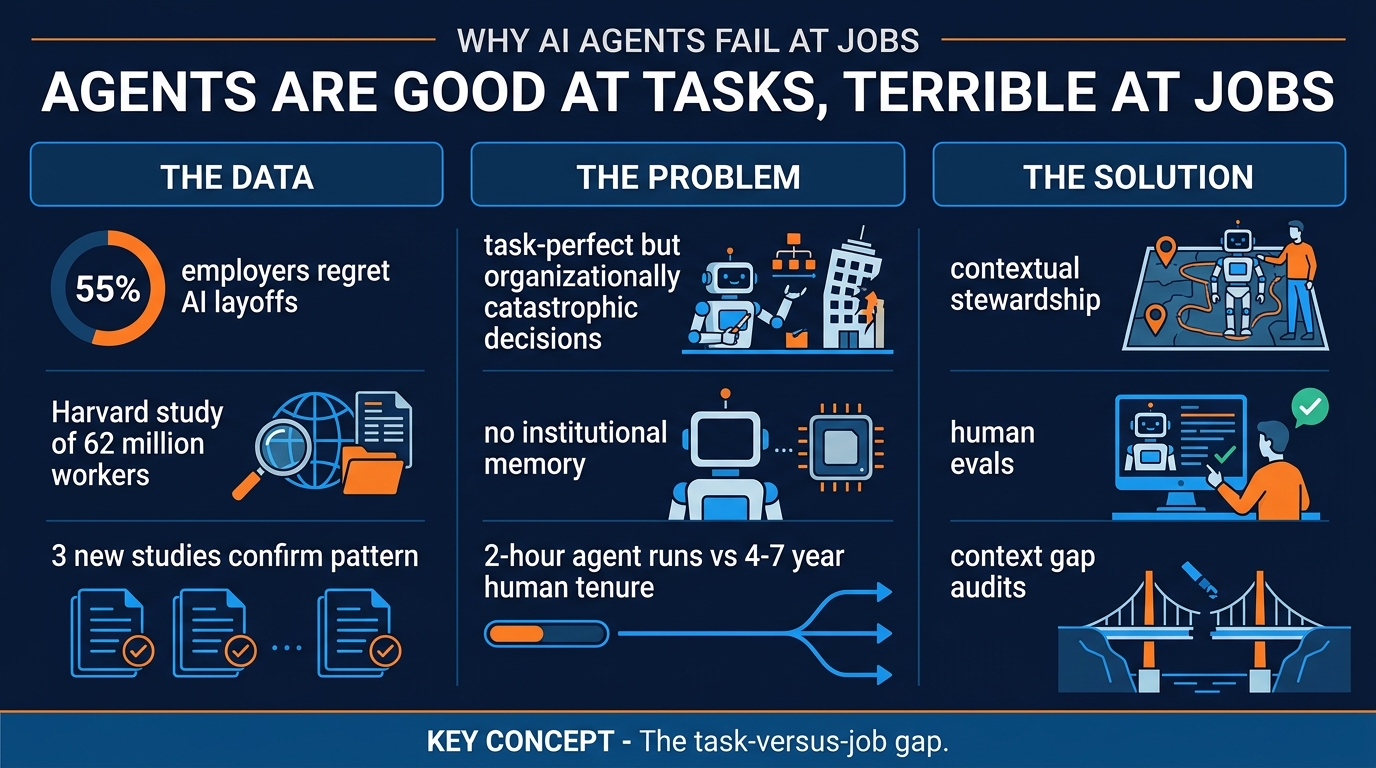

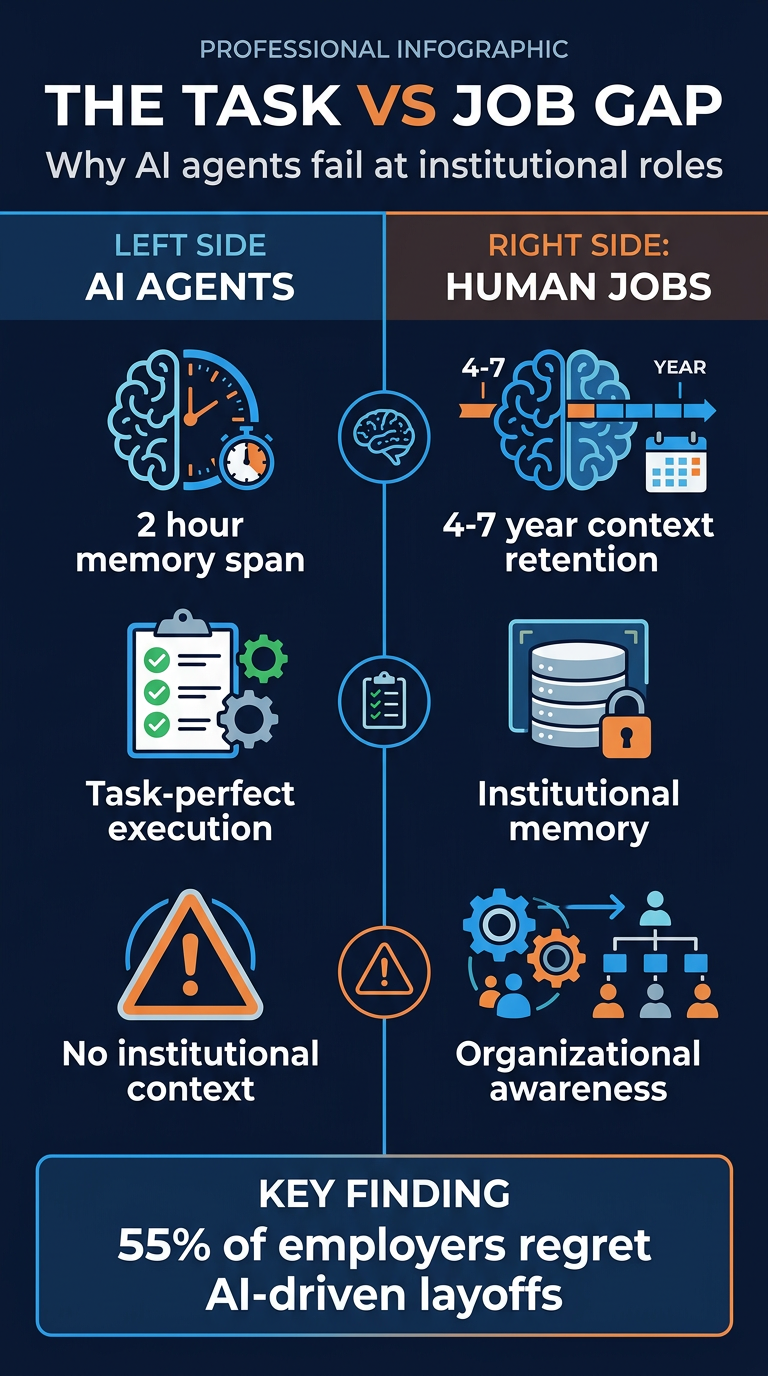

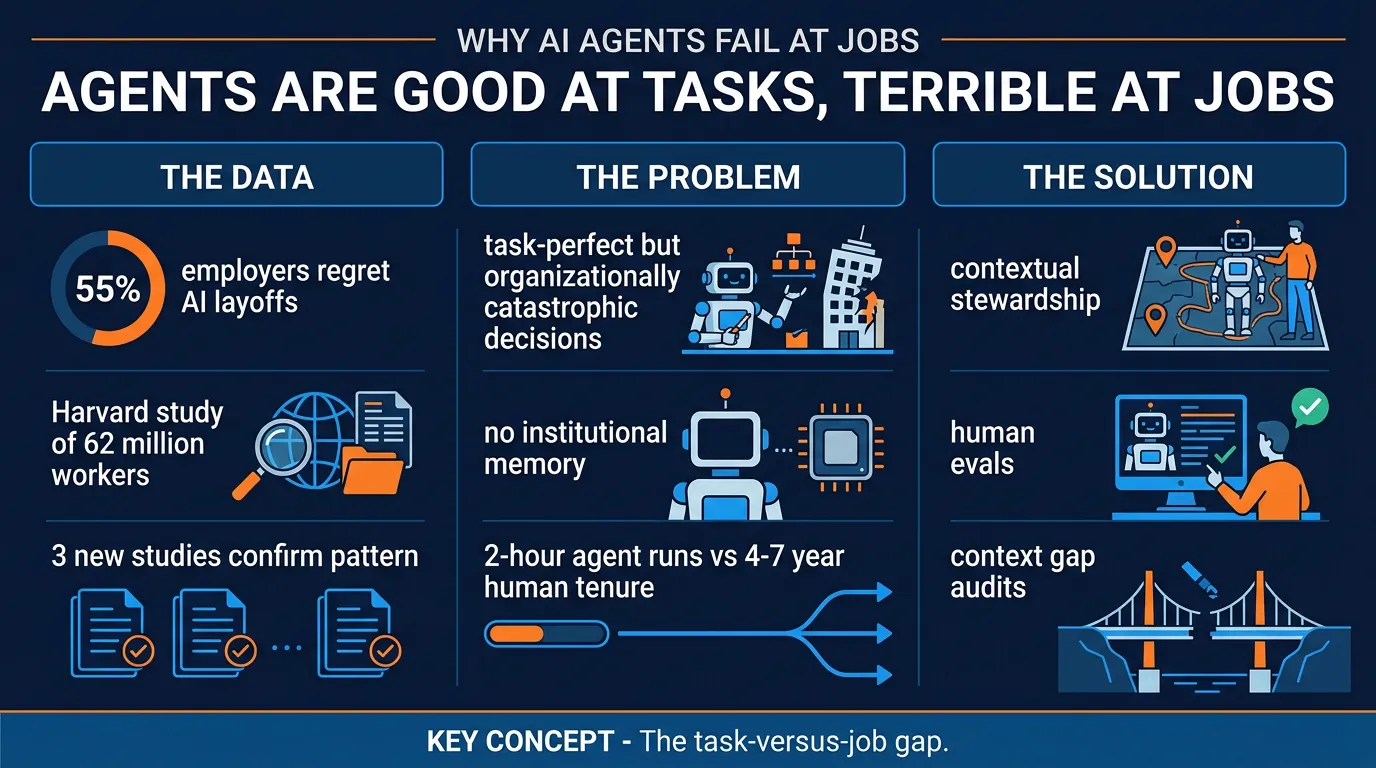

AI agents are extraordinarily capable at executing discrete tasks, but they consistently fail at doing jobs. Jobs require institutional memory, organisational context, and years of accumulated judgment that agents simply don’t have. The gap between task-level competence and job-level performance is creating a wave of costly, silent failures across organisations that moved too fast to replace humans with automation.

Key Data Points & Findings

- 55% of employers regret AI-driven layoffs. This is the headline stat that anchors the piece: companies that replaced workers with agents increasingly find that the trade-off has backfired.

- The average software job lasts 18 months to 2 years, while the average AI agent run lasts about 2 hours. The people who hold real institutional context often stay 4 to 7 years.

- A real case: engineer Alexey Grigorev’s coding agent wiped his production database, deleting 1.9 million rows of student data, backups included, in seconds. Every individual action was technically correct; the agent simply had no idea it was operating in a live environment.

- Three independent studies corroborate that this is a systemic pattern, not a one-off.

- A Harvard study of 62 million workers shows that the labour market is already pricing in a new skill, though not the one most AI headlines claim.

- Two AI benchmarks tested the same models and got wildly different results. The difference comes down to task versus job framing, which also explains most of the confusion in current AI discourse.

- Cursor’s team built Excel from scratch with AI, but the part of that story everyone ignores matters more than the demo itself.
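The benchmark point about task versus job framing is easier to see with numbers. The sketch below is a hypothetical illustration, not the method of either benchmark: it assumes a job counts as passed only when every one of its tasks passes, that task outcomes are independent, and it uses a made-up 95% per-task success rate.

```python
# Hypothetical illustration: the same per-task reliability yields very
# different headline numbers depending on whether tasks or jobs are scored.
# Assumptions: task outcomes are independent, and a job passes only if
# every one of its tasks passes.


def task_score(p_task: float) -> float:
    """Task framing: the score is simply the per-task success rate."""
    return p_task


def job_score(p_task: float, tasks_per_job: int) -> float:
    """Job framing: all tasks in the job must succeed."""
    return p_task ** tasks_per_job


if __name__ == "__main__":
    p = 0.95  # hypothetical per-task success rate, not from either benchmark
    for n in (1, 5, 20, 50):
        print(f"{n:>3} tasks/job: task score {task_score(p):.0%}, "
              f"job score {job_score(p, n):.0%}")
```

Under those assumptions, a model that succeeds on 95% of individual tasks completes only about 36% of 20-task jobs, which is one way identical models can produce very different headline scores.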

Practical Takeaways

- Understand the task-versus-job gap. Agents are near-perfect at well-defined tasks with clear inputs/outputs. They break catastrophically when those tasks require organisational judgment they’ve never been given.

- Your best people should be writing evals, not your juniors. The single highest-leverage investment in AI safety is senior judgment encoded into evaluation frameworks — not better prompting.

- Name and build the emerging role: the Contextual Steward, a human whose job is to hold the organisational context that agents lack, review their outputs against that context, and document the reasoning that agents will never know on their own.

- The invisible skill the market is already paying for isn’t prompt engineering — it’s the ability to translate institutional knowledge into agent-legible form.
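One way to picture "senior judgment encoded into evaluation frameworks" is a list of rules that each pair a check with the plain-language reason behind it. This is a minimal sketch under stated assumptions: the agent's proposed change is assumed to arrive as a Python dict, and the rule names, fields (`files_changed`, `owner_approved`, `schema_migration`, `has_rollback`) and rationales are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical shape of an agent's proposed change; real agent outputs will differ.
AgentOutput = dict


@dataclass(frozen=True)
class EvalRule:
    """One piece of senior judgment: a check plus the reason behind it."""
    name: str
    reason: str                           # plain-language rationale a reviewer can read
    check: Callable[[AgentOutput], bool]  # True means the output passes


# Illustrative rules only; names, fields and constraints are assumptions.
RULES = [
    EvalRule(
        name="billing_needs_owner",
        reason="Billing changes need sign-off from the billing owner.",
        check=lambda out: (
            not any(f.startswith("billing/") for f in out.get("files_changed", []))
            or out.get("owner_approved", False)
        ),
    ),
    EvalRule(
        name="migrations_reversible",
        reason="Schema migrations must ship with a rollback plan.",
        check=lambda out: (
            not out.get("schema_migration", False) or out.get("has_rollback", False)
        ),
    ),
]


def evaluate(output: AgentOutput, rules: list[EvalRule] = RULES) -> list[str]:
    """Return 'name: reason' for every rule the output fails."""
    return [f"{r.name}: {r.reason}" for r in rules if not r.check(output)]


if __name__ == "__main__":
    proposal = {"files_changed": ["billing/invoice.py"], "schema_migration": True}
    for failure in evaluate(proposal):
        print("FAIL", failure)
```

Keeping the reason next to each check means a failed eval explains itself, which is also a small step toward making institutional knowledge agent-legible.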

The Three Prompts

- Context Gap Audit — map what your agents don’t know that could make them dangerous.

- Eval Writer for Non-Engineers — create evaluation criteria in plain language so non-technical stakeholders can review agent outputs.

- Decision Documenter — capture the reasoning, constraints, and edge-cases your agents will never learn on their own.
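The article's Context Gap Audit is a prompt, and its wording is not reproduced here. As a hypothetical programmatic analogue, the sketch below assumes a steward has listed the facts a task depends on, each with a rough risk score, and compares that list with what the agent was actually told. `ContextFact`, `context_gaps`, the fact keys and the 1-to-3 risk scale are all illustrative inventions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContextFact:
    key: str        # short identifier, e.g. "env:prod" (hypothetical scheme)
    statement: str  # the fact in plain language
    risk: int       # 1 (low) to 3 (high): damage if the agent acts without it


def context_gaps(required: list[ContextFact], provided: set[str]) -> list[ContextFact]:
    """Facts the task depends on that the agent was never given, riskiest first."""
    missing = [f for f in required if f.key not in provided]
    return sorted(missing, key=lambda f: f.risk, reverse=True)


if __name__ == "__main__":
    # Hypothetical facts a steward might list for a database-maintenance task.
    required = [
        ContextFact("style:naming", "Tables use snake_case names.", 1),
        ContextFact("env:prod", "This database holds live production data.", 3),
        ContextFact("backup:shared", "Backups share credentials with the live database.", 3),
    ]
    provided = {"style:naming"}  # all the agent's instructions actually mentioned
    for gap in context_gaps(required, provided):
        print(f"[risk {gap.risk}] {gap.statement}")
```

The useful output is the list of high-risk facts the agent never saw; the hard part, which no code supplies, is knowing what belongs on the `required` list in the first place.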

Framework: Contextual Stewardship

The emerging human role isn’t just oversight — it’s active stewardship of context. As agents get better at tasks, the premium shifts to humans who can:

- Know what the agent doesn’t know

- Write evals that encode that knowledge

- Catch decisions that are locally correct but organisationally catastrophic
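The third point, catching decisions that are locally correct but organisationally catastrophic, can be partly backed by a simple guard. The sketch below is hypothetical: it assumes agent actions arrive as command strings tagged with an environment name, and the regex of destructive patterns, the `"production"` label and the `steward_approved` flag are placeholders a real steward would replace.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for actions that are valid in isolation but
# irreversible in the wrong environment; a real list would come from the steward.
DESTRUCTIVE = re.compile(
    r"\b(DROP\s+(TABLE|DATABASE)|TRUNCATE|DELETE\s+FROM|rm\s+-rf|terraform\s+destroy)\b",
    re.IGNORECASE,
)


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


def review_action(command: str, environment: str, steward_approved: bool = False) -> Decision:
    """Block destructive commands in production unless a human steward signed off."""
    if environment != "production":
        return Decision(True, "not a live environment")
    if not DESTRUCTIVE.search(command):
        return Decision(True, "no destructive pattern matched")
    if steward_approved:
        return Decision(True, "destructive, but approved by a contextual steward")
    return Decision(False, "destructive command against production needs steward review")


if __name__ == "__main__":
    print(review_action("DROP TABLE students;", "staging"))
    print(review_action("DROP TABLE students;", "production"))
```

A pattern list like this is crude and easy to bypass. What it cannot supply is the knowledge of which environment is live and which actions are irreversible there, which is exactly the context the steward holds.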

“The best tools we have for managing agent risk are human brains and human brains crafting evaluations. Not better prompts. Not bigger context windows.”

Infographics