5 AI Agents, 5 Contradictory Bets — Nate’s Substack Summary

Main Thesis

The AI agent landscape is a high-stakes product strategy case study. Every major company responding to the rise of OpenClaw (described as the most consequential AI provocation since ChatGPT) made a fundamentally different strategic bet on the same set of tradeoffs. The divergence in their responses — not the horse-race coverage — is the real story worth studying.

Key Context & Market Signals

- OpenClaw emerged from a hobby project with a lobster emoji and triggered a wave of responses within four months

- Nvidia compared it to Linux

- OpenAI brought its creator on board

- Meta spent $2 billion on Manus

- Perplexity shipped a $200/month cloud agent, then quickly added a local agent on a dedicated Mac — acknowledging cloud alone wasn’t sufficient

- Anthropic shipped Dispatch

- Shenzhen’s government began subsidizing companies building on OpenClaw

- 1,000 people lined up outside Tencent HQ to have it installed, then paid to have it removed, a fee dubbed the “stupidity tax”

- Lovable ($400M ARR, $6.6B valuation) — the most copied AI product of 2025 — is expanding beyond app building into general-purpose agent tasks

The Three-Axis Framework

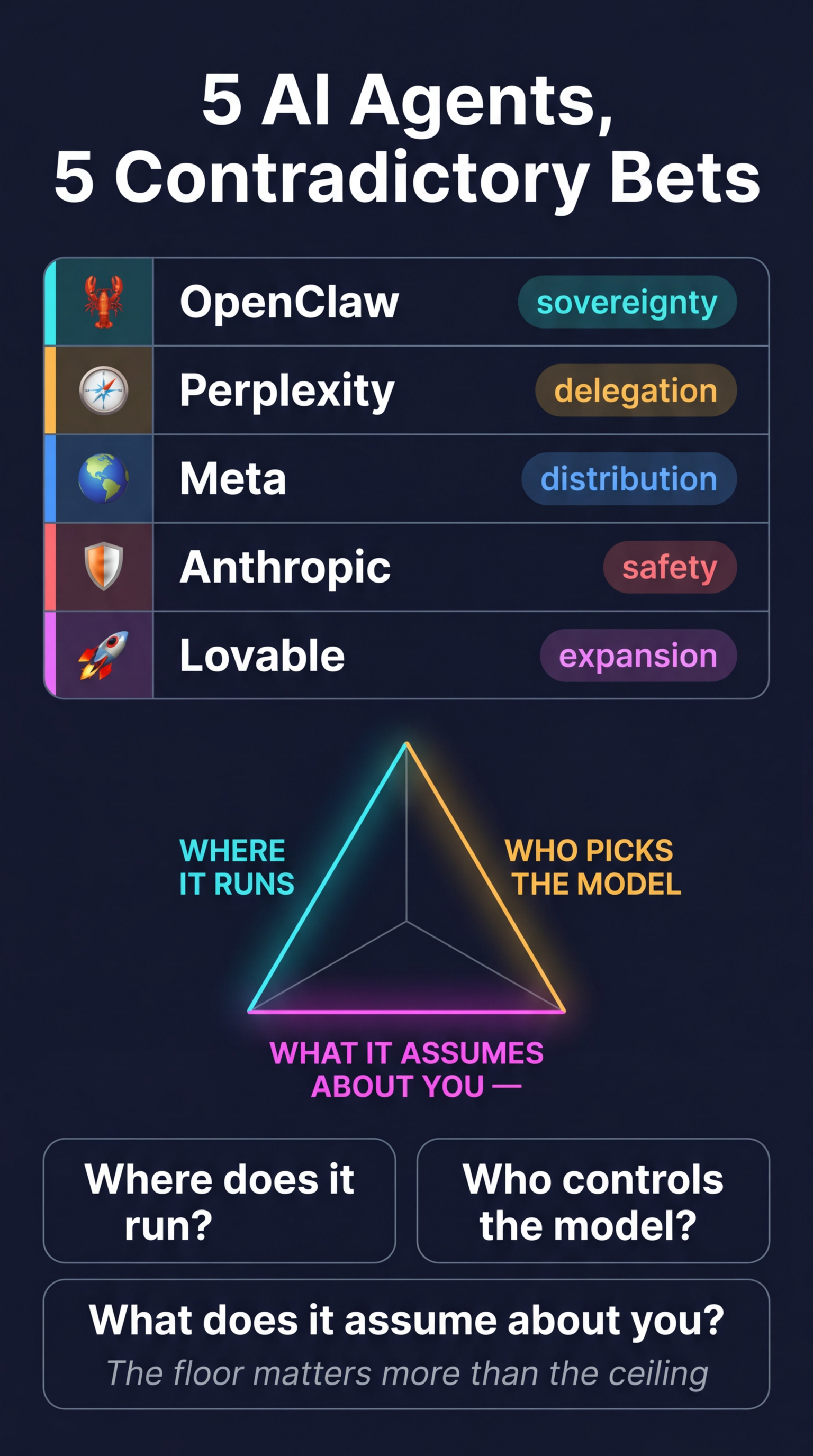

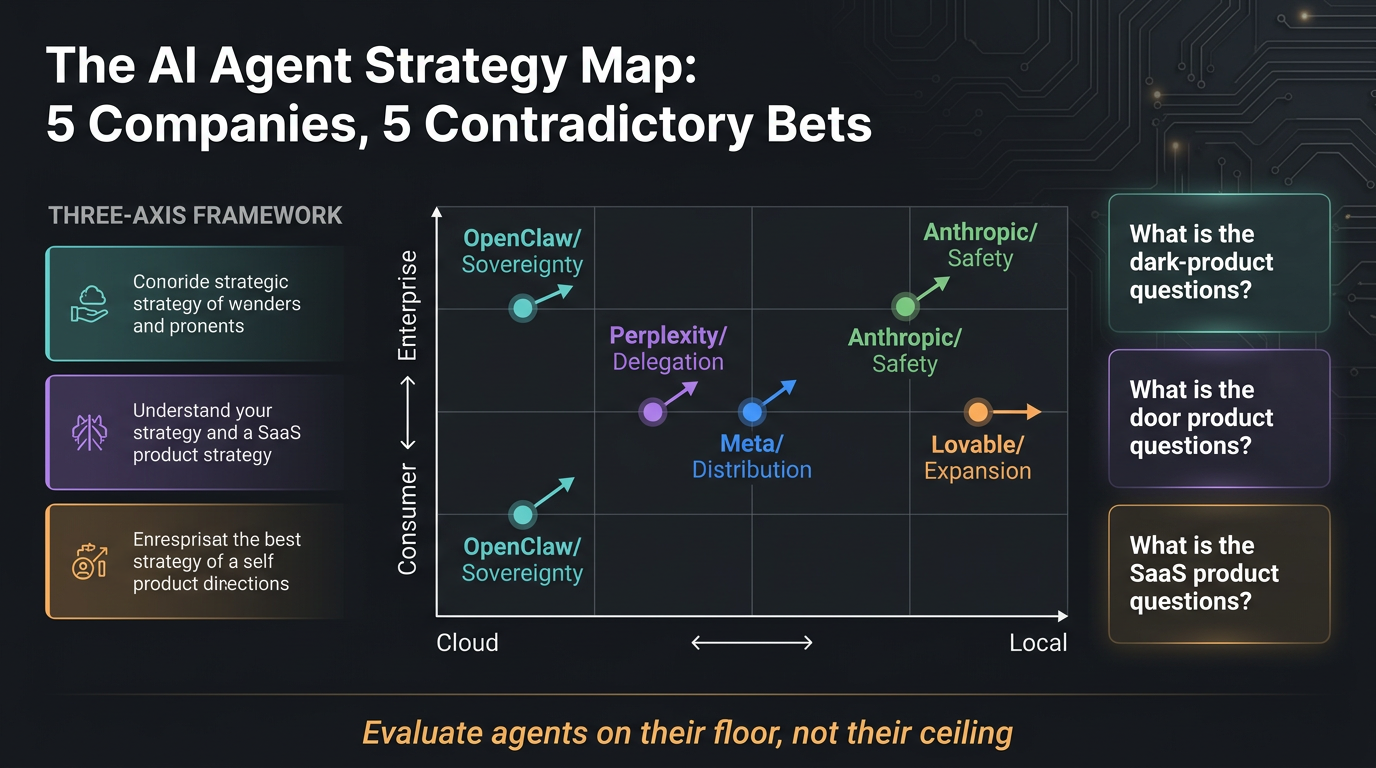

Nate introduces an evaluation lens applicable to every agent product:

- Where the agent runs — cloud vs. local

- Who picks the model — the vendor or the user

- What the interface assumes about you — technical sophistication, delegation comfort, trust level
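As a rough illustration of how the three axes could be recorded and compared, here is a minimal Python sketch. It is not taken from the article: the type names, the 1-to-5 rating scale, and the `mismatches` helper are all assumptions made for this example, and the profiles are hypothetical placeholders rather than assessments of any real product.

```python
from dataclasses import astuple, dataclass
from enum import Enum


class Runtime(Enum):
    """Axis 1: where the agent runs."""
    CLOUD = "cloud"
    LOCAL = "local"


class ModelChooser(Enum):
    """Axis 2: who picks the model."""
    VENDOR = "vendor"
    USER = "user"


@dataclass(frozen=True)
class InterfaceAssumptions:
    """Axis 3: what the interface assumes (hypothetical 1-5 ratings)."""
    technical_sophistication: int
    delegation_comfort: int
    trust_level: int


@dataclass(frozen=True)
class Profile:
    """Either an agent's position on the axes or a user's requirements."""
    name: str
    runtime: Runtime
    model_chooser: ModelChooser
    interface: InterfaceAssumptions


def mismatches(agent: Profile, user: Profile) -> list[str]:
    """List the axes on which the agent does not fit the user."""
    found = []
    if agent.runtime != user.runtime:
        found.append("runtime")
    if agent.model_chooser != user.model_chooser:
        found.append("model_chooser")
    # The interface mismatches when it assumes more than the user offers
    # on any of the three ratings.
    demanded = astuple(agent.interface)
    offered = astuple(user.interface)
    if any(d > o for d, o in zip(demanded, offered)):
        found.append("interface")
    return found


# Hypothetical example: a cloud agent evaluated by a user who wants local.
agent = Profile("example-agent", Runtime.CLOUD, ModelChooser.VENDOR,
                InterfaceAssumptions(4, 3, 3))
user = Profile("me", Runtime.LOCAL, ModelChooser.VENDOR,
               InterfaceAssumptions(2, 5, 3))
print(mismatches(agent, user))
```

Treating the interface axis as "the agent must not assume more than the user offers" is one possible reading; a stricter or looser rule would work equally well within the same structure.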

Five Strategic Plays Dissected

| Company | Bet |
|---|---|
| OpenClaw | Sovereignty: user owns the stack |
| Perplexity | Delegation: abstract away complexity |
| Meta | Distribution: win via scale and ecosystem |
| Anthropic | Safety positioning: enterprise trust first |
| Lovable | Expansion: pivot from vertical tool to general agent |

Key Frameworks & Takeaways

- Three questions to evaluate any new agent product (the details are paywalled, but the questions are framed around the three axes above)

- The floor matters more than the ceiling — don’t evaluate agents on their best-case demos; evaluate their worst-case reliability

- A product strategy landscape map shows where each agent sits and what it means for developers, knowledge workers, enterprise buyers, and product builders

- Lovable’s pivot is called the subplot that should be taught in business schools — even category-defining products feel the gravitational pull of the agent wars
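The "floor over ceiling" point can be made concrete with a small Python sketch. It assumes you have run each agent several times on the same task and scored each run between 0 and 1; the scoring scheme, the function names, and the sample numbers are all hypothetical and only illustrate the idea of ranking by the worst observed run rather than the best.

```python
from statistics import mean


def floor_and_ceiling(scores: list[float]) -> dict[str, float]:
    """Summarize repeated runs of one agent on the same task."""
    if not scores:
        raise ValueError("need at least one run to summarize")
    return {
        "floor": min(scores),    # worst observed run
        "ceiling": max(scores),  # best observed run (the demo case)
        "mean": mean(scores),
    }


def pick_by_floor(results: dict[str, list[float]]) -> str:
    """Return the agent whose worst run is the least bad."""
    return max(results, key=lambda name: min(results[name]))


# Hypothetical scores: agent_a has the better demo, agent_b the better floor.
results = {
    "agent_a": [0.9, 1.0, 0.2],
    "agent_b": [0.7, 0.75, 0.7],
}
print(floor_and_ceiling(results["agent_a"]))
print(pick_by_floor(results))
```

Ranking by the maximum score instead would select `agent_a`, which is the demo-driven choice the takeaway warns against.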

Practical Tools Offered (Paid)

- Agent selection advisor prompt — prevents hype-driven choices

- Compression stress-test for product builders

- Reusable three-axis evaluator prompt for every future agent launch

Bottom Line

The agent wars aren’t about which AI is smartest. They’re about where computation lives, who controls model choice, and what level of trust the product assumes. Understanding those three axes lets you cut through launch hype and make principled decisions about which agent actually fits your workflow or product strategy.